Hyper-SD: Revolutionizing Image Synthesis with Trajectory Segmented Consistency Models

What is Hyper-SD: Revolutionizing Image Synthesis with Trajectory Segmented Consistency Models?

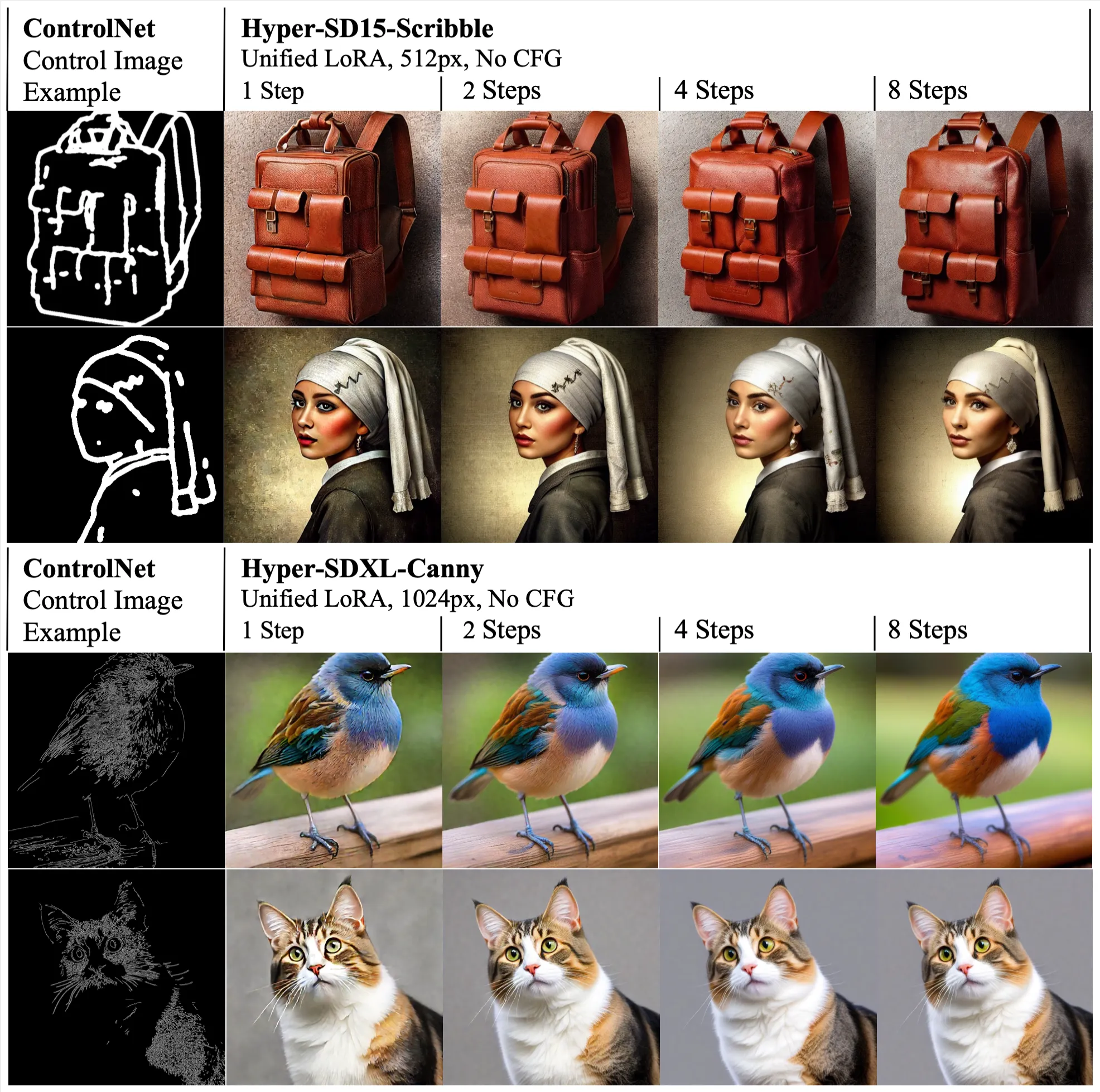

Hyper-SD is a method that makes image generation with Stable Diffusion much faster while keeping quality high. It can produce strong results in as few as 1 to 8 steps, so you see final images very quickly.

It does this by training a small add-on (a LoRA) in stages. The training combines trajectory segmented consistency distillation, human feedback learning, and score distillation so the final output stays sharp and faithful to your prompt.

Hyper-SD: Revolutionizing Image Synthesis with Trajectory Segmented Consistency Models Overview

Here is a quick look at the project and what it offers.

| Item | Details |
|---|---|
| Project name | Hyper-SD |
| Type | Speed-up method for Stable Diffusion via LoRA |
| Purpose | High-quality images in 1–8 steps with low delay |
| Works with | SDXL and SD 1.5 |
| Key ideas | Trajectory Segmented Consistency Distillation, human feedback learning, score distillation, one LoRA for all steps |
| Outputs | Images from text prompts, fast drafts, quick iterations |
| Demo | Real-Time Generation Demo of Hyper-SD |
| Website | https://hyper-sd.github.io/ |
| Noted metrics | SDXL 1-step: +0.68 CLIP Score and +0.51 Aes Score over SDXL-Lightning |
| Best for | Real-time previews, quick design cycles, lower compute settings |

Hyper-SD: Revolutionizing Image Synthesis with Trajectory Segmented Consistency Models Key Features

- Fast results in 1–8 steps. A finished image needs only a handful of denoising steps.

- One LoRA for all steps. The same add-on works across different step counts.

- Works on SDXL and SD 1.5. It supports two popular Stable Diffusion families.

- Segmented training. The path from noise to image is split into time segments during training, so the fast model stays close to the original model's path.

- Human feedback learning. The model is tuned to match what people prefer.

- Score distillation add-on. This gives an extra boost for low-step quality.

Hyper-SD: Revolutionizing Image Synthesis with Trajectory Segmented Consistency Models Use Cases

- Creative work with fast drafts. Try more ideas in less time.

- Real-time product mockups. Update text and see changes right away.

- A/B testing prompts. Compare prompts with quick turnarounds.

- Low compute settings. Fewer steps help on modest GPUs or edge devices.

- Live demos and shows. Smooth on-stage runs with short wait times.

How It Works

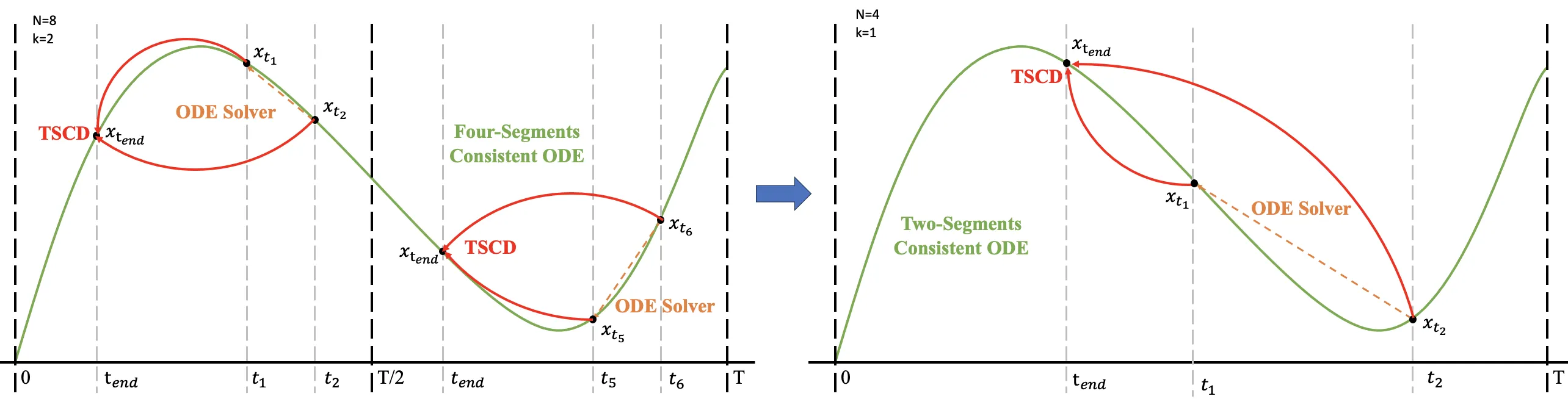

Think of image generation as a path from noise to a clear picture. Hyper-SD learns that path in chunks of time, not all at once, so it stays faithful to the original model’s way of drawing the image.

First, it trains in small time segments to keep the main path stable. Then it adds human feedback to fix weak spots at very low step counts. Finally, it adds score distillation so the LoRA stays strong when you run 1–8 steps.

In the end, you get a single LoRA that you can load and run at any step count you want. This keeps speed and quality steady, and it keeps prompts behaving as you expect.
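
To make "any step count" concrete, here is a minimal sketch of how a sampler might choose which noise levels to visit for a given number of steps. The function name `trailing_timesteps`, the 1000-step training schedule, and the evenly spaced layout that starts at the noisiest timestep are assumptions for illustration (they are common in Stable Diffusion tooling), not details taken from the Hyper-SD project page.

```python
from __future__ import annotations


def trailing_timesteps(num_steps: int, num_train_timesteps: int = 1000) -> list[int]:
    """Return evenly spaced sampling timesteps, noisiest first.

    Assumption: the base model was trained on `num_train_timesteps`
    discrete noise levels, indexed 0 (clean) to num_train_timesteps - 1.
    """
    if not 1 <= num_steps <= num_train_timesteps:
        raise ValueError(f"num_steps must be in 1..{num_train_timesteps}")
    stride = num_train_timesteps / num_steps
    # Count down from the top of the schedule in equal strides.
    return [round(num_train_timesteps - i * stride) - 1 for i in range(num_steps)]


for steps in (1, 4, 8):
    print(steps, trailing_timesteps(steps))
# 1 [999]
# 4 [999, 749, 499, 249]
# 8 [999, 874, 749, 624, 499, 374, 249, 124]
```

Fewer steps means larger jumps between noise levels, which is why a model tuned for low step counts has to cover more of the path in each call.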

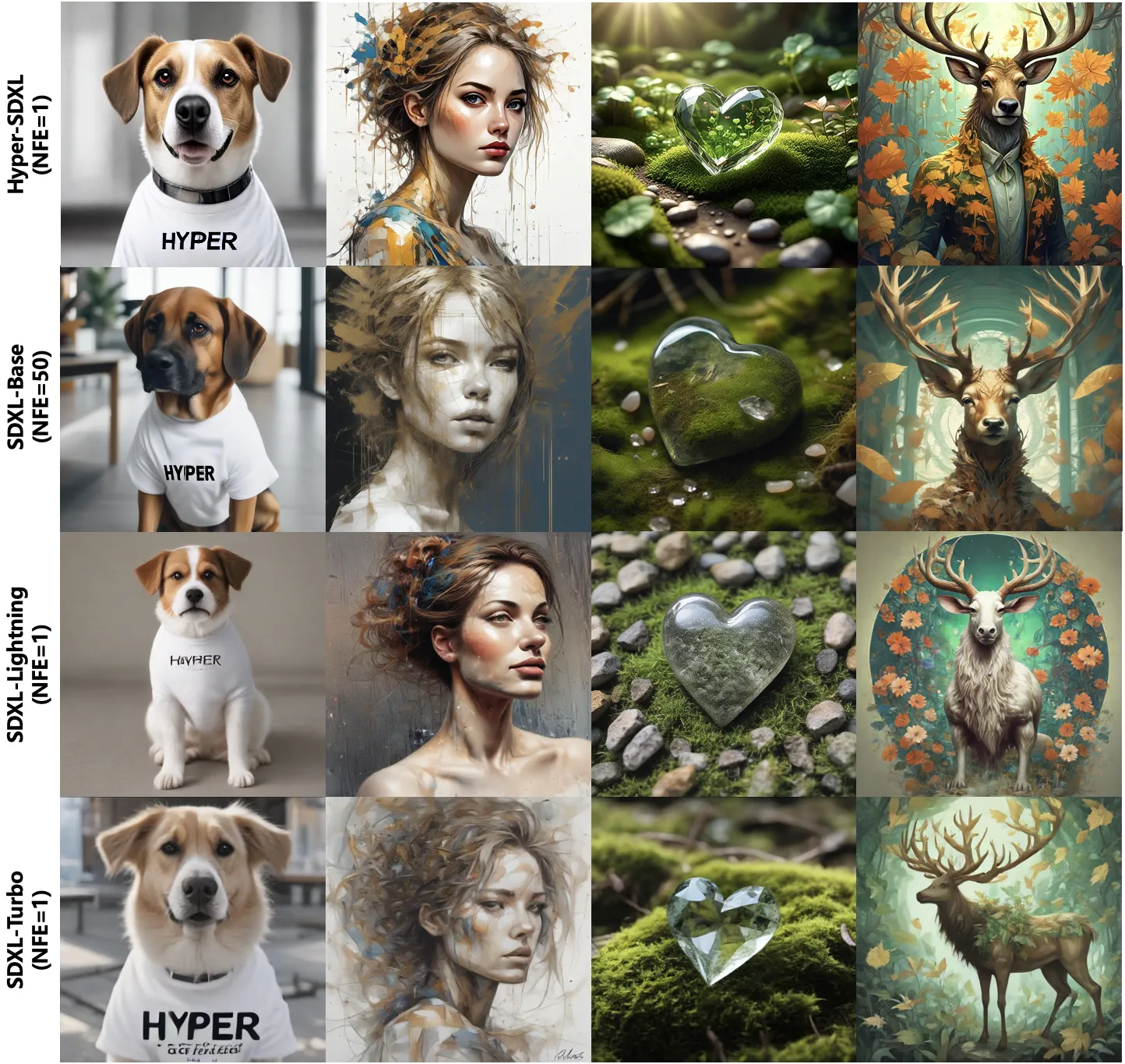

Performance & Showcases

Hyper-SD reports top results from 1 to 8 steps on both SDXL and SD 1.5. In the reported single-step comparison, Hyper-SDXL beats SDXL-Lightning by +0.68 CLIP Score and +0.51 Aes Score (aesthetic score).

Showcase 1: fast prompt-to-image generation in real time, shown in the Real-Time Generation Demo of Hyper-SD.

The Technology Behind It

Past speed-up methods tend to fall into two groups: those that preserve the original sampling path and those that reshape it. Hyper-SD combines both ideas. It keeps the path steady by training in time segments, and it improves low-step quality with human feedback and score distillation.

- Trajectory Segmented Consistency Distillation: trains the student model in short time windows so it follows the teacher closely.

- Human feedback learning: guides the model toward user-preferred outputs, fixing color, composition, and prompt faithfulness issues in low steps.

- Score distillation: strengthens the student for very few steps so images stay clear and consistent.

Together, these parts give you fast runs with strong prompt alignment and pleasant looks.
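
The segment idea in the first bullet can be sketched in a few lines. This covers only the bookkeeping (where each time window begins and ends), not the distillation loss itself. The 1000-step schedule, the equal-width split, the stage sizes 8, 4, 2, 1, and the helper names `segment_edges` and `segment_start` are assumptions for illustration.

```python
from __future__ import annotations


def segment_edges(k: int, total: int = 1000) -> list[int]:
    """Split the timestep range [0, total] into k equal-width segments."""
    if k < 1:
        raise ValueError("k must be at least 1")
    return [round(i * total / k) for i in range(k + 1)]


def segment_start(t: int, k: int, total: int = 1000) -> int:
    """Return the lower edge of the segment that contains timestep t.

    In a segmented scheme, the student is only asked to be consistent
    within a segment, so this edge is the point it is trained to reach.
    """
    edges = segment_edges(k, total)
    for lo, hi in zip(edges, edges[1:]):
        if lo < t <= hi:
            return lo
    raise ValueError(f"t must satisfy 0 < t <= {total}")


# Hypothetical progressive stages: many short windows first, one long window last.
for k in (8, 4, 2, 1):
    print(k, segment_start(700, k))
# 8 625
# 4 500
# 2 500
# 1 0
```

With many segments, the target for timestep 700 is nearby (625), so the student only needs short, teacher-like moves. With a single segment, the target is 0, which corresponds to jumping straight to the clean image.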

Installation & Setup

Right now, the public site at https://hyper-sd.github.io/ is a project page with a demo and results. The material reviewed for this article does not include install commands or a training script.

Here is how to get started today:

- Visit the project page to study examples and metrics.

- Watch the real-time demo on the project page to see speed and quality.

- Check for links to LoRA files or releases on the project page as they become available.

If official setup steps or model files are posted later, follow them exactly as provided on the project page.
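
Once a LoRA file is in hand, loading it would likely follow the usual Hugging Face `diffusers` pattern. The sketch below is an illustration under stated assumptions, not official Hyper-SD setup: the LoRA path is a hypothetical placeholder, the base model ID is the public SDXL base checkpoint, and the helper names `check_steps` and `generate` are made up here. Scheduler and guidance settings are left at library defaults and should be taken from the official release notes when they exist.

```python
from pathlib import Path


def check_steps(num_steps: int) -> int:
    """Hyper-SD targets 1 to 8 inference steps; reject anything else early."""
    if not 1 <= num_steps <= 8:
        raise ValueError(f"expected 1 to 8 steps, got {num_steps}")
    return num_steps


def generate(prompt: str, lora_file: str, num_steps: int = 4,
             base_model: str = "stabilityai/stable-diffusion-xl-base-1.0"):
    """Load SDXL, attach a LoRA from a local file, and render one image.

    Assumptions: `torch` and `diffusers` are installed, a CUDA GPU is
    available, and `lora_file` points at a downloaded .safetensors LoRA.
    """
    # Imported lazily so the step check above works without the heavy deps.
    import torch
    from diffusers import StableDiffusionXLPipeline

    steps = check_steps(num_steps)
    lora_path = Path(lora_file)

    pipe = StableDiffusionXLPipeline.from_pretrained(
        base_model, torch_dtype=torch.float16
    )
    # Directory plus weight_name is the documented way to load a local LoRA.
    pipe.load_lora_weights(str(lora_path.parent), weight_name=lora_path.name)
    pipe.to("cuda")

    result = pipe(prompt=prompt, num_inference_steps=steps)
    return result.images[0]
```

A call might look like `generate("a red bicycle on a beach", "loras/hyper-sd-lora.safetensors", num_steps=4).save("out.png")`, where the file path is a placeholder for whatever the project eventually publishes.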

Why It Matters

Waiting through many sampling steps slows you down and costs more. By cutting steps to 1–8 while keeping quality high, Hyper-SD shortens the loop from idea to image.

This means more tries per minute, faster reviews, and less compute cost over time. Teams can move from prompt tests to final art much faster.

Tips for Better Results

- Start with 4 steps for a balance of speed and detail, then adjust down or up.

- Keep prompts clear and focused. Short, direct prompts often work best at very low steps.

- If an image feels off at 1 step, try 2–4 steps before changing the prompt.

- Use negative prompts to control clutter or unwanted styles.

FAQ

What is Hyper-SD?

Hyper-SD is a LoRA-based method that speeds up Stable Diffusion, giving high-quality images in very few steps.

Which base models does it support?

It supports SDXL and SD 1.5.

How fast is it?

It is built for 1–8 inference steps, with solid quality even at 1 step.

Do I need a top-tier GPU?

Fewer steps help on modest hardware, so you can get good results on common GPUs. Exact speed depends on your setup.

Can I use my own prompts and styles?

Yes. Prompt as you normally do with SDXL or SD 1.5 and load the Hyper-SD LoRA when available.

Is the code public?

The project page shows results and a demo. Keep an eye on it for model files or setup notes.

Image source: Hyper-SD: Revolutionizing Image Synthesis with Trajectory Segmented Consistency Models