Beyond the Frame: Mastering Extreme Viewpoint 4D Video Synthesis with EX-4D

What is EX-4D?

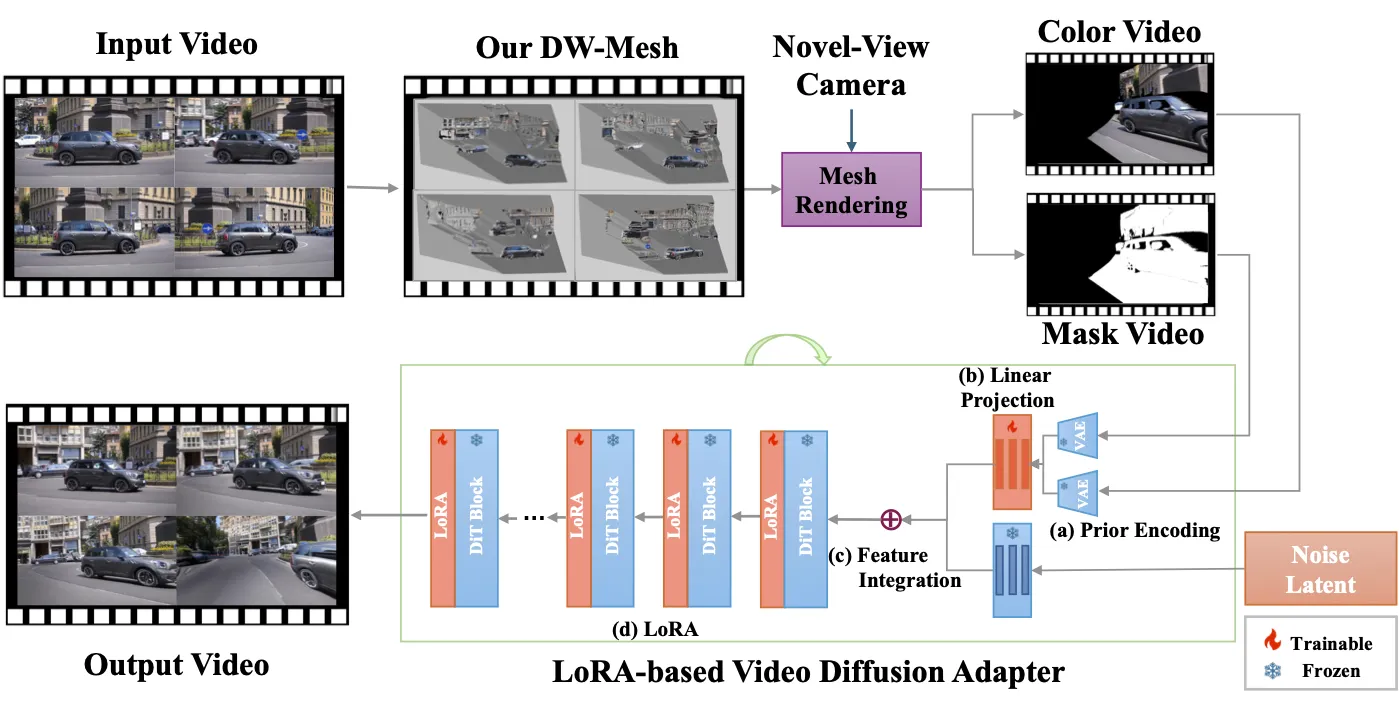

EX-4D is a research project that turns an ordinary single-view video into a video with a freely movable camera. You can pan, tilt, and swing the camera through very wide angles, from −90° to 90°, while the scene stays stable and consistent. It does this by first building a strong 3D geometric guide from your video, then using a pre-trained video diffusion model to fill in the new frames.

The team uses a 3D representation called a Depth Watertight Mesh (DW-Mesh) that models both the visible and the hidden (occluded) parts of the scene. This helps keep lines straight, keeps people and objects in the right place, and keeps motion smooth when the camera view changes a lot.

EX-4D Overview

Here is a quick look at the project in one place.

| Item | Details |
|---|---|
| Project Name | EX-4D: EXtreme Viewpoint 4D Video Synthesis via Depth Watertight Mesh |
| Type | Research code + demo |
| Purpose | Turn a monocular (single-camera) video into a camera-controllable 4D video with extreme viewpoints |
| Input | A single monocular video |
| Output | A new video with camera moves: ±30°, ±60°, ±90°, 180, zoom in/out |
| Core Ideas | Depth Watertight Mesh, simulated masking for training, lightweight LoRA video diffusion adapter |
| Training Data | Built from monocular videos (no multi-view pairs needed) |
| Notable Strength | Keeps geometry stable under extreme viewpoints |
| Model Footprint | About 140M trainable parameters, roughly 1% of a 14B-parameter video diffusion backbone |
| Who It’s For | Film teams, creators, VR/AR builders, game teams, and tool makers |
| Code | https://github.com/tau-yihouxiang/EX-4D |
| Project Page | https://tau-yihouxiang.github.io/projects/EX-4D/EX-4D.html |
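The footprint row is easy to sanity-check. This short snippet uses the rounded figures from the table, not exact parameter counts read from the checkpoints. It also assumes the 14B backbone is the Wan2.1-I2V-14B model downloaded during setup.

```python
# Rounded figures from the overview table (assumed; not read from the checkpoints).
trainable_params = 140e6   # LoRA adapter: about 140M parameters
backbone_params = 14e9     # video diffusion backbone: about 14B parameters

fraction = trainable_params / backbone_params
print(f"Trainable share: {fraction:.2%}")  # prints "Trainable share: 1.00%"
```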


EX-4D Key Features

- Extreme angle control: Move the camera up to ±90° while keeping the scene stable.

- Strong geometry: A Depth Watertight Mesh models both seen and hidden parts of the scene.

- Lightweight training: Only about 1% of the parameters (a LoRA adapter) are trained on top of the large video model.

- No multi-view data: A simulated masking strategy builds training pairs from single-view videos.

- Temporal consistency: Frames stay coherent across the whole clip.


EX-4D Use Cases

- Film and post: Create new camera angles without re-shooting.

- VR/AR: Build free-viewpoint clips from phone or camera footage.

- Games: Turn 2D footage into rich 3D-style scenes for trailers or cutscenes.

- Social content: Add big camera sweeps to short videos with one input clip.

- Space tours: Walk through rooms or scenes from many views for design and planning.

How It Works

EX-4D builds a Depth Watertight Mesh from the input video, using depth estimated by DepthCrafter (see the setup below). The mesh covers both the surfaces you can see and the regions hidden from the original camera. This helps the model preserve shape and scale even at extreme angles.
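Here is a toy sketch of one common way to turn a depth map into a mesh. Each pixel is unprojected with a pinhole camera, and the pixel grid is triangulated without dropping any faces, so the surface has no cracks at depth edges. Faces that span a large depth jump are flagged as stretched; they stand in for the hidden regions. This is an illustrative simplification, not the EX-4D DW-Mesh construction. The function names, intrinsics, and the `jump` threshold are all hypothetical.

```python
def unproject(depth, fx, fy, cx, cy):
    """Lift every pixel (u, v) with depth z to a 3D point with a pinhole camera."""
    verts = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            verts.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return verts


def grid_faces(depth, jump=0.5):
    """Triangulate the pixel grid, keeping every face.

    Each 2 x 2 pixel block becomes two triangles, so there are no cracks at
    depth edges. A face whose corner depths differ by more than `jump` is
    flagged as stretched: it bridges a foreground/background gap and stands in
    for surface the input camera could not see.
    """
    h, w = len(depth), len(depth[0])
    faces, stretched = [], []
    for v in range(h - 1):
        for u in range(w - 1):
            i = v * w + u  # vertex index of the block's top-left pixel
            for tri in ((i, i + 1, i + w), (i + 1, i + w + 1, i + w)):
                zs = [depth[k // w][k % w] for k in tri]
                faces.append(tri)
                stretched.append(max(zs) - min(zs) > jump)
    return faces, stretched


# A near surface (z = 1) next to a far one (z = 3).
depth = [
    [1.0, 1.0, 3.0],
    [1.0, 1.0, 3.0],
]
verts = unproject(depth, fx=1.0, fy=1.0, cx=1.0, cy=0.5)
faces, stretched = grid_faces(depth)
print(len(verts), len(faces), sum(stretched))  # 6 4 2
```

Dropping the two stretched faces would open a hole behind the near surface once the camera moves. In this toy setup, keeping them and knowing which ones they are lets a later stage treat those regions as areas to fill in.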

During training, a simulated masking strategy creates training pairs from the video itself, so the system learns to fill in the regions a new viewpoint would expose. A lightweight LoRA-based adapter then connects this geometry to a strong pre-trained video diffusion model, producing high-quality frames with smooth motion.
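The key point of simulated masking is that the target is the original frame itself, so no second camera is needed. The sketch below uses a random rectangular hole as a stand-in mask. EX-4D's actual masking strategy is not described here, so treat the rectangle as a placeholder. `make_training_pair` is a hypothetical helper.

```python
import random


def make_training_pair(frame, hole_h, hole_w, rng):
    """Build (masked_input, mask, target) from a single frame (hypothetical helper).

    frame is a 2D list of pixel values. A hole_h x hole_w rectangle at a random
    position is hidden; mask is 1 on hidden pixels and 0 elsewhere. The untouched
    frame is the target, so no second camera view is required.
    """
    h, w = len(frame), len(frame[0])
    top = rng.randrange(h - hole_h + 1)
    left = rng.randrange(w - hole_w + 1)
    mask = [
        [1 if top <= y < top + hole_h and left <= x < left + hole_w else 0 for x in range(w)]
        for y in range(h)
    ]
    masked = [[0 if mask[y][x] else frame[y][x] for x in range(w)] for y in range(h)]
    return masked, mask, frame


rng = random.Random(0)
frame = [[10 * y + x for x in range(4)] for y in range(3)]
masked, mask, target = make_training_pair(frame, hole_h=2, hole_w=2, rng=rng)
print(sum(map(sum, mask)))  # 4: the hidden 2 x 2 region
```

For a video, you would build a mask for each frame and train the model to predict the original clip from the masked one.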

Installation & Setup

Follow these steps exactly as shown.

Clone the repo and set up the environment:

```bash
# Clone the repository
git clone https://github.com/tau-yihouxiang/EX-4D.git
cd EX-4D

# Create conda environment
conda create -n ex4d python=3.10
conda activate ex4d

# Install PyTorch (2.x recommended)
pip install torch==2.4.1 torchvision==0.19.1 torchaudio==2.4.1 --index-url https://download.pytorch.org/whl/cu124

# Install Nvdiffrast
pip install git+https://github.com/NVlabs/nvdiffrast.git

# Install dependencies and diffsynth
pip install -e .

# Install DepthCrafter for depth estimation (follow DepthCrafter's installation instructions to prepare its checkpoints)
git clone https://github.com/Tencent/DepthCrafter.git
```
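After the commands above, a quick import check can catch a failed build before you download tens of gigabytes of weights. This sketch assumes the import names match the package names; `diffsynth` in particular is an assumption based on the install comment. DepthCrafter is cloned rather than installed, so it is not checked.

```python
import importlib.util

# Import names we expect after the setup steps. "diffsynth" is an assumption
# based on the "Install dependencies and diffsynth" comment.
REQUIRED = ["torch", "torchvision", "torchaudio", "nvdiffrast", "diffsynth"]


def check_modules(names):
    """Map each top-level module name to True if it can be found (not imported)."""
    return {name: importlib.util.find_spec(name) is not None for name in names}


if __name__ == "__main__":
    found = check_modules(REQUIRED)
    for name, ok in found.items():
        print(f"{name:12s} {'ok' if ok else 'MISSING'}")
    if found["torch"]:
        import torch  # imported only when present

        print("CUDA available:", torch.cuda.is_available())
```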

Download the pretrained models:

```bash
huggingface-cli download Wan-AI/Wan2.1-I2V-14B-480P --local-dir ./models/Wan-AI
huggingface-cli download yihouxiang/EX-4D --local-dir ./models/EX-4D
```
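Once the downloads finish, this small check confirms that both `--local-dir` targets exist and are not empty. It only checks presence, not file integrity, and it assumes you run it from the EX-4D repository root.

```python
from pathlib import Path

# Local directories used by the two huggingface-cli commands above.
MODEL_DIRS = [Path("./models/Wan-AI"), Path("./models/EX-4D")]


def missing_model_dirs(dirs):
    """Return the directories that are absent or empty (the download likely failed)."""
    return [d for d in dirs if not d.is_dir() or not any(d.iterdir())]


if __name__ == "__main__":
    missing = missing_model_dirs(MODEL_DIRS)
    if missing:
        print("Missing or empty:", ", ".join(str(d) for d in missing))
    else:
        print("All model directories present.")
```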

Getting Results: Step-by-step

Step 1: Reconstruct the DW-Mesh and export the color and mask videos for the chosen camera path:

```bash
# --cam presets: 180 (used here), 30, 60, 90, zoom_in, zoom_out
python recon.py --input_video examples/flower/input.mp4 --cam 180 --output_dir outputs/flower --save_mesh
```
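To render several camera paths for the same clip, you can loop over the presets listed in the comment. This sketch only builds, and optionally runs, the documented Step 1 command. It assumes each preset writes its own `color_<cam>.mp4` and `mask_<cam>.mp4`, inferred from the `_180` file names in Step 2, so runs sharing one `--output_dir` should not overwrite each other. Whether recon.py recomputes depth on every run is not documented here. Set `dry_run=False` to actually execute.

```python
import shlex
import subprocess
from pathlib import Path

# Camera presets listed in the recon.py comment above.
CAM_PRESETS = ["30", "60", "90", "180", "zoom_in", "zoom_out"]


def recon_cmd(input_video, cam, output_dir):
    """Build the Step 1 command for one preset, using only the documented flags."""
    if cam not in CAM_PRESETS:
        raise ValueError(f"unknown --cam preset: {cam!r}")
    return ["python", "recon.py", "--input_video", str(input_video),
            "--cam", cam, "--output_dir", str(output_dir), "--save_mesh"]


def run_all(input_video, output_dir, dry_run=True):
    """Print (and, unless dry_run, execute) Step 1 for every preset."""
    for cam in CAM_PRESETS:
        cmd = recon_cmd(input_video, cam, output_dir)
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    run_all(Path("examples/flower/input.mp4"), Path("outputs/flower"))
```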

Step 2: Generate the final EX-4D video from the Step 1 outputs (needs about 48 GB of VRAM):

```bash
python generate.py --color_video outputs/flower/color_180.mp4 --mask_video outputs/flower/mask_180.mp4 --output_video outputs/flower/output.mp4
```
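Before launching Step 2, it is worth confirming that a GPU has enough memory. This sketch queries `nvidia-smi`, whose `--query-gpu=memory.total --format=csv,noheader,nounits` options print one MiB value per GPU, and compares each value against about 48 GB. The threshold is an assumption: it treats the figure as decimal gigabytes, so cards marketed as 48 GB still pass even though they often report somewhat under 49,152 MiB (48 GiB). It also assumes a single-GPU run.

```python
import subprocess

# ~48 GB (decimal) expressed in MiB, since nvidia-smi reports MiB. Approximate.
REQUIRED_MIB = 48_000_000_000 // 2**20


def parse_total_mib(nvidia_smi_output):
    """Parse one integer MiB value per non-empty line of nvidia-smi CSV output."""
    return [int(line.strip()) for line in nvidia_smi_output.splitlines() if line.strip()]


def query_total_mib():
    """Ask nvidia-smi for each GPU's total memory in MiB."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=memory.total", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_total_mib(out)


if __name__ == "__main__":
    try:
        totals = query_total_mib()
    except (FileNotFoundError, subprocess.CalledProcessError):
        totals = []
        print("nvidia-smi not available; cannot check GPU memory.")
    for idx, total in enumerate(totals):
        status = "ok" if total >= REQUIRED_MIB else "below ~48 GB"
        print(f"GPU {idx}: {total} MiB ({status})")
```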

Tip: The DepthCrafter setup needs its own checkpoints. Follow its repo steps to prepare them before running EX-4D.

The Technology Behind It

- Depth Watertight Mesh: Represents the scene as a closed, leak-free 3D surface built from depth, covering both visible surfaces and regions hidden behind objects.

- Simulated masking: During training, the team hides parts of frames to teach the model how to fill in missing areas, so no multi-view data is required.

- LoRA video adapter: A small low-rank adapter (about 1% of the parameters) steers a large pre-trained video model with geometry cues, keeping quality high while keeping training cheap.
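The adapter idea can be shown with the standard LoRA formulation. The pre-trained weight W stays frozen, and only a low-rank update B·A is learned, scaled by alpha/r. This pure-Python sketch is a generic illustration; EX-4D's layer choices, rank, and scaling are not given here. With B initialized to zero, the adapted layer starts out identical to the frozen one, so training begins from the pre-trained model's behavior.

```python
import random


def transpose(m):
    """Transpose a matrix stored as a list of rows."""
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    """Multiply an n x k matrix by a k x m matrix (lists of rows)."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


class LoRALinear:
    """Linear layer with a frozen weight W plus a trainable low-rank update.

    forward(x) = x W^T + (alpha / r) * x A^T B^T, where A is r x d_in and
    B is d_out x r. Only A and B would receive gradients during training.
    """

    def __init__(self, weight, rank, alpha, rng):
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight  # frozen pre-trained weight, d_out x d_in
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]  # zero init: no change at start
        self.scale = alpha / rank

    def forward(self, x):
        """Map a batch x d_in input to a batch x d_out output."""
        base = matmul(x, transpose(self.weight))
        update = matmul(matmul(x, transpose(self.A)), transpose(self.B))
        return [[b + self.scale * u for b, u in zip(rb, ru)] for rb, ru in zip(base, update)]


def lora_param_share(d_in, d_out, rank):
    """Adapter parameters as a fraction of the frozen layer's parameters."""
    return rank * (d_in + d_out) / (d_in * d_out)


layer = LoRALinear([[1.0, 0.0], [0.0, 1.0]], rank=1, alpha=1.0, rng=random.Random(0))
print(layer.forward([[1.0, 2.0]]))  # equals the frozen layer's output while B is zero
print(lora_param_share(4096, 4096, 16))
```

Take a hypothetical 4096 × 4096 layer at rank 16. The adapter adds 16 × (4096 + 4096) = 131,072 parameters against 16,777,216 frozen ones, about 0.78%. That is the same order as the roughly 1% reported for EX-4D.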

Performance & Showcases

Showcase 1: This clip highlights steady shape and motion under strong camera moves.

Showcase 2: The scene holds up as the camera swings to very wide angles.

Showcase 3: Details remain stable as the camera view changes.

Showcase 4: Movement stays smooth across frames with big perspective shifts.

Showcase 5: Geometry stays consistent even when parts of the scene go in and out of view.

Showcase 6: The output keeps both texture and structure in sync under hard angles.


Notes, Limits, and Tips

- Depth quality matters: If the depth estimate is off, edges may wobble at very big angles.

- Heavy GPU use: High resolution and long clips can take a lot of compute and VRAM.

- Tricky materials: Mirrors, glass, and shiny items are still hard and may need extra care.

FAQ

What input do I need?

You need a single video file. Short, clear clips with steady motion and normal lighting tend to work best.

What hardware should I plan for?

The team notes that the generation step (generate.py) needs about 48 GB of GPU memory. If you have less, shorter clips or a lower resolution may help.

Do I need multi-view or depth labels to train?

No. The project uses a simulated masking strategy to learn from single-view videos. Depth is estimated with DepthCrafter as part of the setup.

Can I choose different camera paths?

Yes. The comment in the recon.py step lists the presets: 30, 60, 90, 180, zoom_in, and zoom_out. Change the --cam value to try a different move.
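The presets are built into recon.py, and their exact trajectories are not spelled out here. As a rough picture of what a −90° to 90° swing means, this sketch places camera centers on a circle around a scene point. The radius, frame count, and coordinate convention are assumptions. It does not add a custom-path option to the CLI.

```python
import math


def orbit_positions(radius, yaw_start_deg, yaw_end_deg, n_frames):
    """Camera centers on a horizontal circle around a scene point at the origin.

    Assumed convention: yaw 0 is the input view at (0, 0, -radius) looking toward
    +z, and positive yaw moves the camera toward +x. Each camera is assumed to
    keep looking at the origin.
    """
    positions = []
    for i in range(n_frames):
        t = i / (n_frames - 1) if n_frames > 1 else 0.0
        yaw = math.radians(yaw_start_deg + t * (yaw_end_deg - yaw_start_deg))
        positions.append((radius * math.sin(yaw), 0.0, -radius * math.cos(yaw)))
    return positions


for x, y, z in orbit_positions(2.0, -90, 90, 5):
    print(f"({x:+.2f}, {y:+.2f}, {z:+.2f})")
```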
