StereoWorld: Transforming 2D Videos into Immersive 3D Reality with Geometry-Aware Tech

What is StereoWorld: Transforming 2D Videos into Immersive 3D Reality with Geometry-Aware Tech?

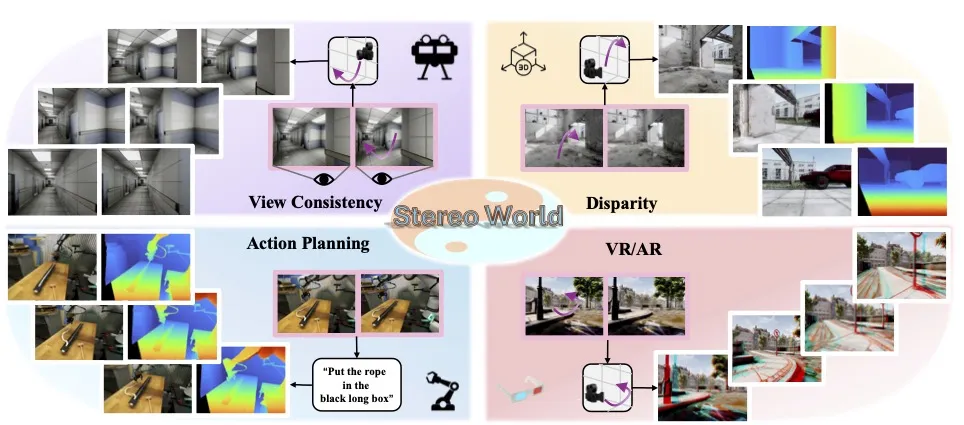

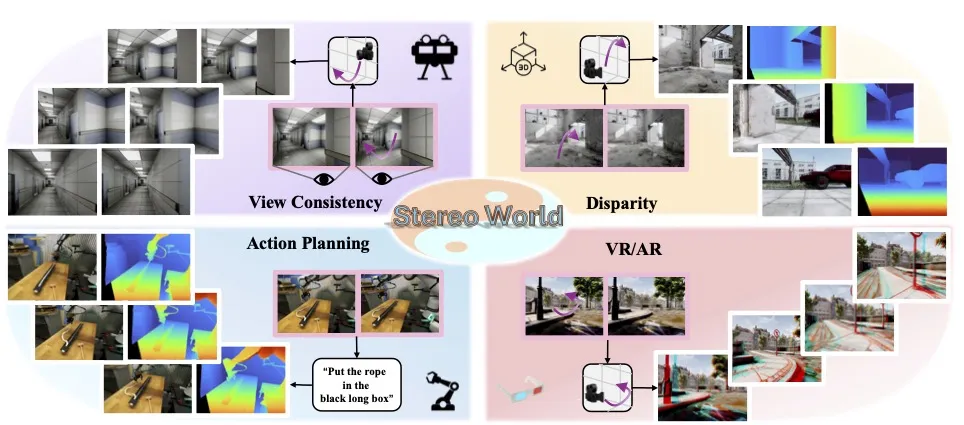

StereoWorld is a research project that turns plain 2D videos into 3D stereo videos. It uses camera guidance and geometry cues so that the left and right views of each frame stay consistent, which keeps depth stable over time.

It starts from normal videos or binocular image pairs and produces a stereo video that feels stable and true to scene structure. The team shows it can also work for many views, not just two.

StereoWorld: Transforming 2D Videos into Immersive 3D Reality with Geometry-Aware Tech Overview

Here is a quick overview of what the project is, who it is for, and what you can expect.

| Item | Detail |
|---|---|
| Type | Research model and method for stereo video generation |
| Purpose | Turn 2D input into left–right stereo videos with strong, consistent depth |
| Inputs | Standard 2D video or binocular image pair |
| Outputs | Stereo video (left and right views), with frame-to-frame consistency |
| Core ideas | Camera-guided generation, geometry-aware design, Unified Camera-Frame RoPE, long video distillation via self-forcing |
| Multi-view | Method extends to multi-view video generation (works with the Wan video model) |
| Status | Code and model weights coming soon |
| Project site | https://sunyangtian.github.io/StereoWorld-web/ |
| GitHub | https://github.com/SunYangtian/StereoWorld |
| Authors | Sun Yang-Tian, Huang Zehuan, Niu Yifan, Ma Lin, Cao Yan-Pei, Ma Yuewen, Qi Xiaojuan |
| Year | 2026 (arXiv preprint) |
| Best for | Creators, VR/AR teams, filmmakers, researchers, educators |
| License | Not stated at the time of writing |

StereoWorld: Transforming 2D Videos into Immersive 3D Reality with Geometry-Aware Tech Key Features

- Camera-guided stereo video generation: the model follows the camera setup to keep left and right views aligned over time.

- Strong depth cues: it uses stereo vision, the same depth cue humans use, to recover 3D structure from two views.

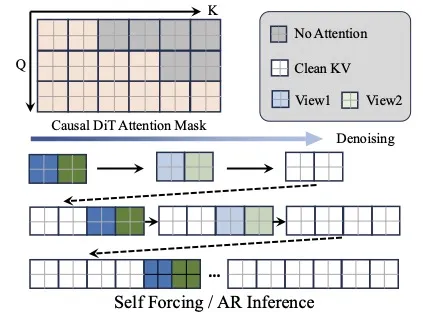

- Long video stability: a “self-forcing” process keeps depth steady across many frames.

- Multi-view ready: the same trick that works for stereo can extend to many camera views.

- Works with existing video models: the team shows it can be added to the Wan video model without extra training.

This image highlights the core goal: camera-guided stereo video generation that stays consistent through the whole clip. It shows how the model respects camera motion and scene geometry.
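To make the "strong depth cues" point concrete, here is a minimal sketch of the textbook relation between stereo disparity and depth for a rectified camera pair: depth = focal length × baseline / disparity. This is general stereo geometry, not code from StereoWorld. The function name and the numbers (focal length, baseline, disparities) are illustrative assumptions.

```python
def depth_from_disparity(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth in metres of a point seen by a rectified stereo pair.

    disparity_px: horizontal shift of the point between left and right views (pixels)
    focal_px:     focal length expressed in pixels
    baseline_m:   distance between the two camera centres (metres)
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of both cameras")
    return focal_px * baseline_m / disparity_px


# Illustrative values only: 1000 px focal length, 6.5 cm baseline.
near = depth_from_disparity(disparity_px=65.0, focal_px=1000.0, baseline_m=0.065)
far = depth_from_disparity(disparity_px=6.5, focal_px=1000.0, baseline_m=0.065)

# A larger left-right shift means the point is closer to the cameras.
print(f"near point: {near:.2f} m, far point: {far:.2f} m")
```

Because depth is inversely proportional to disparity, small left–right mismatches between generated views translate directly into depth errors, which is why keeping the two views aligned matters.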

How StereoWorld Works (Plain-English Walkthrough)

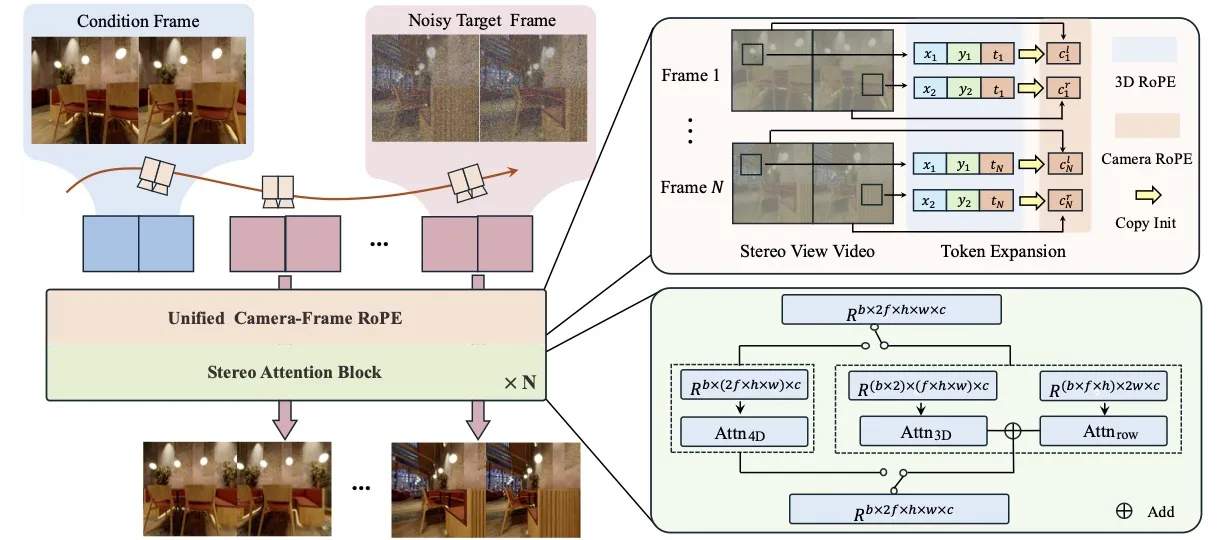

- Step 1: You start with a normal video or a binocular image pair. With a binocular pair, the left and right images give the model two views from which to sense depth.

- Step 2: The model reads camera information and encodes both camera direction and frame timing in a shared way (called Unified Camera-Frame RoPE).

- Step 3: It then produces stereo frames that match across time so the depth stays stable, even in long videos.

This pipeline image shows the flow from input frames to stereo outputs. The key point: the camera guides the process, helping the system keep geometry correct across the whole sequence.

The Technology Behind It (In Simple Terms)

- Unified Camera-Frame RoPE: a neat way to pack both camera pose and frame index into the model. This helps the model “know” where the camera is and where in time a frame belongs.

- Multi-view extension: the same idea that works for two views also works for many views. The team added it to the Wan model and got multi-view results without any extra training.

- Depth stability over time: the method reduces flicker and keeps left–right agreement across frames.
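The idea of encoding camera and time together can be illustrated with a small rotary position embedding (RoPE) sketch. This is not the authors' formulation, which has not been released. It assumes a simplified setup where each token carries an integer camera index and an integer frame index, and where half of the feature channels are rotated by the camera index and the other half by the frame index. The function names `rope_rotate` and `camera_frame_rope` are hypothetical.

```python
import math


def rope_rotate(vec, position, base=10000.0):
    """Apply standard 1D rotary embedding to an even-length vector."""
    dim = len(vec)
    assert dim % 2 == 0, "RoPE rotates channels in pairs"
    out = []
    for i in range(0, dim, 2):
        theta = position * base ** (-i / dim)  # per-pair rotation angle
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        out.extend([x * c - y * s, x * s + y * c])
    return out


def camera_frame_rope(vec, camera_idx, frame_idx):
    """Hypothetical two-axis RoPE: first half encodes the camera, second half the frame."""
    half = len(vec) // 2
    return rope_rotate(vec[:half], camera_idx) + rope_rotate(vec[half:], frame_idx)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


# Toy query/key vectors (arbitrary values).
q = [0.3, -1.2, 0.7, 0.5, 1.1, -0.4, 0.2, 0.9]
k = [1.0, 0.1, -0.6, 0.8, -0.3, 0.5, 0.4, -1.0]

# Same relative offsets (1 camera apart, 3 frames apart) at two different absolute times.
score_a = dot(camera_frame_rope(q, 0, 2), camera_frame_rope(k, 1, 5))
score_b = dot(camera_frame_rope(q, 0, 10), camera_frame_rope(k, 1, 13))
print(abs(score_a - score_b) < 1e-9)
```

The property shown is the usual reason to use RoPE: the attention score depends only on the relative offset between two tokens, here both across cameras and across frames. How StereoWorld maps an actual camera pose to the rotation is not described in the source.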

Long Videos Made Steady

Long videos can drift in depth across time. StereoWorld tackles this with a “self-forcing” setup that nudges the model to keep depth consistent.

Each sequence keeps stereo agreement from start to end. This gives you a smooth 3D feel across long shots.
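A toy example can show why drift appears in long rollouts and why training on the model's own outputs targets it. The sketch below is not StereoWorld's training code. It assumes a one-number "frame" (a single depth value), a static scene, and a hypothetical model `biased_step` that overshoots by a small amount each step. It compares predictions conditioned on reference frames with predictions conditioned on the model's own previous output.

```python
def teacher_forced(step_fn, reference):
    """Predict each frame from the previous *reference* frame."""
    return [reference[0]] + [step_fn(prev) for prev in reference[:-1]]


def self_rollout(step_fn, first_frame, num_frames):
    """Predict each frame from the model's *own* previous output."""
    frames = [first_frame]
    for _ in range(num_frames - 1):
        frames.append(step_fn(frames[-1]))
    return frames


def biased_step(depth):
    """Hypothetical imperfect model: adds a small depth error at every step."""
    return depth + 0.01


num_frames = 50
reference = [2.0] * num_frames  # static scene: true depth stays at 2.0

tf_pred = teacher_forced(biased_step, reference)
ar_pred = self_rollout(biased_step, reference[0], num_frames)

tf_err = [abs(p - r) for p, r in zip(tf_pred, reference)]
ar_err = [abs(p - r) for p, r in zip(ar_pred, reference)]

# Conditioned on reference frames the error stays small;
# conditioned on its own outputs the same model drifts steadily.
print(f"last-frame error, reference-conditioned: {tf_err[-1]:.3f}")
print(f"last-frame error, self-conditioned:      {ar_err[-1]:.3f}")
```

A loss computed on the self-conditioned rollout sees the accumulated error, so it can penalize drift that a reference-conditioned loss never observes. That is the general motivation behind self-forcing style training; the specifics of StereoWorld's setup are not given in the source.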

This figure shows stereo video on the left and the matching depth estimate on the right. The attention mask helps the model keep focus where it matters during training.

StereoWorld: Transforming 2D Videos into Immersive 3D Reality with Geometry-Aware Tech Use Cases

- VR/AR content: turn a normal clip into stereo video ready for headsets.

- Film and TV: add stereo depth for 3D edits or re-releases of classic footage.

- Education: show depth in science clips so students can see shape and distance clearly.

- Research and labs: test multi-view ideas by plugging this method into a base video model.

- Archiving: upgrade old footage into stereo for new 3D experiences.

Installation & Setup

The team states that the code and model weights are in the final stage of release. There are no install commands yet.

To get ready:

- Watch the GitHub repo for updates: https://github.com/SunYangtian/StereoWorld

- Check the project site for new demos and notes: https://sunyangtian.github.io/StereoWorld-web/

- Prepare test videos you might want to convert once the release is out.

Performance & Showcases

Showcase 1: Acknowledgements. This short clip sits under “Acknowledgements” and confirms the project’s web template credit. The website template is borrowed from Nerfies.

Showcase 3: Stereo Video Generation. This showcase highlights Stereo Video Generation, focusing on left–right consistency and stable depth. It shows how camera guidance helps keep both views in sync over time.

Showcase 4: Stereo Video Generation. Here, Stereo Video Generation is shown again with more scenes, making depth cues easy to see across moving frames. It underlines how the left and right streams agree.

Showcase 5: Stereo Video Generation. This Stereo Video Generation example shows steady geometry through different motions and textures. It keeps the two views lined up for a clear sense of depth.

Showcase 6: Stereo Video Generation. A final Stereo Video Generation clip shows stable results across varied shots. It keeps frames consistent across time and across the stereo pair.

Who Should Use StereoWorld?

Creators who want 3D depth from regular videos will find this useful. Teams building VR/AR apps can add stereo as a clear upgrade to their content. Researchers can test multi-view ideas by adding this method to a base video model.

Roadmap and What to Expect Next

- Public release: code and weights are marked “coming soon” on GitHub.

- More examples: expect more stereo and multi-view clips as the team refines the method.

- Wider model support: the Wan result hints that other video models could work too.

Quick Recap

StereoWorld turns 2D videos into stereo 3D with camera guidance and depth-aware design. It can also extend to many views and plug into existing video models. The team plans to release code and weights soon.

Image source: StereoWorld: Transforming 2D Videos into Immersive 3D Reality with Geometry-Aware Tech