Astra: Navigating the Future with Hierarchical Multimodal Learning for Mobile Robots

What is Astra: Navigating the Future with Hierarchical Multimodal Learning for Mobile Robots

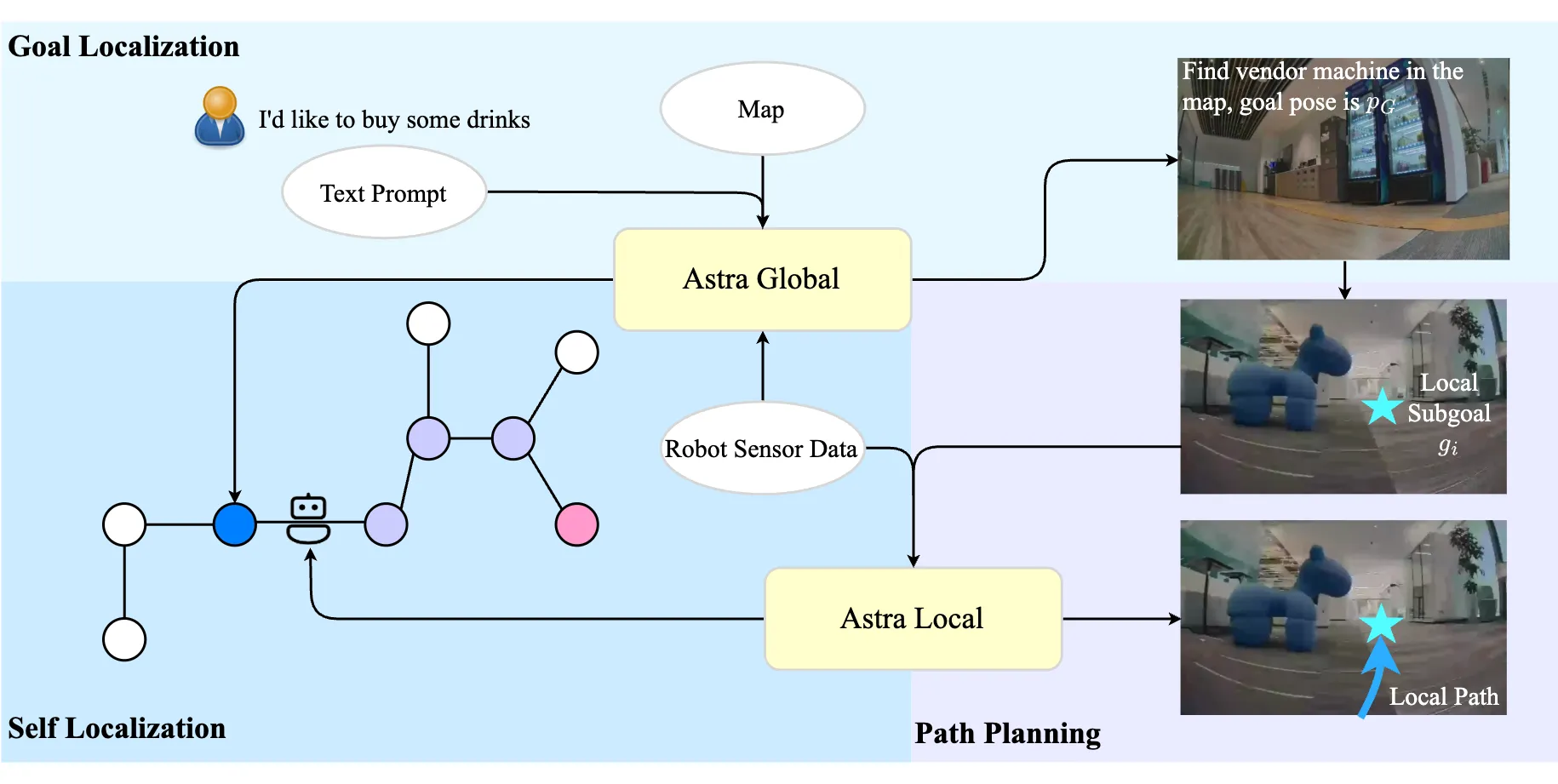

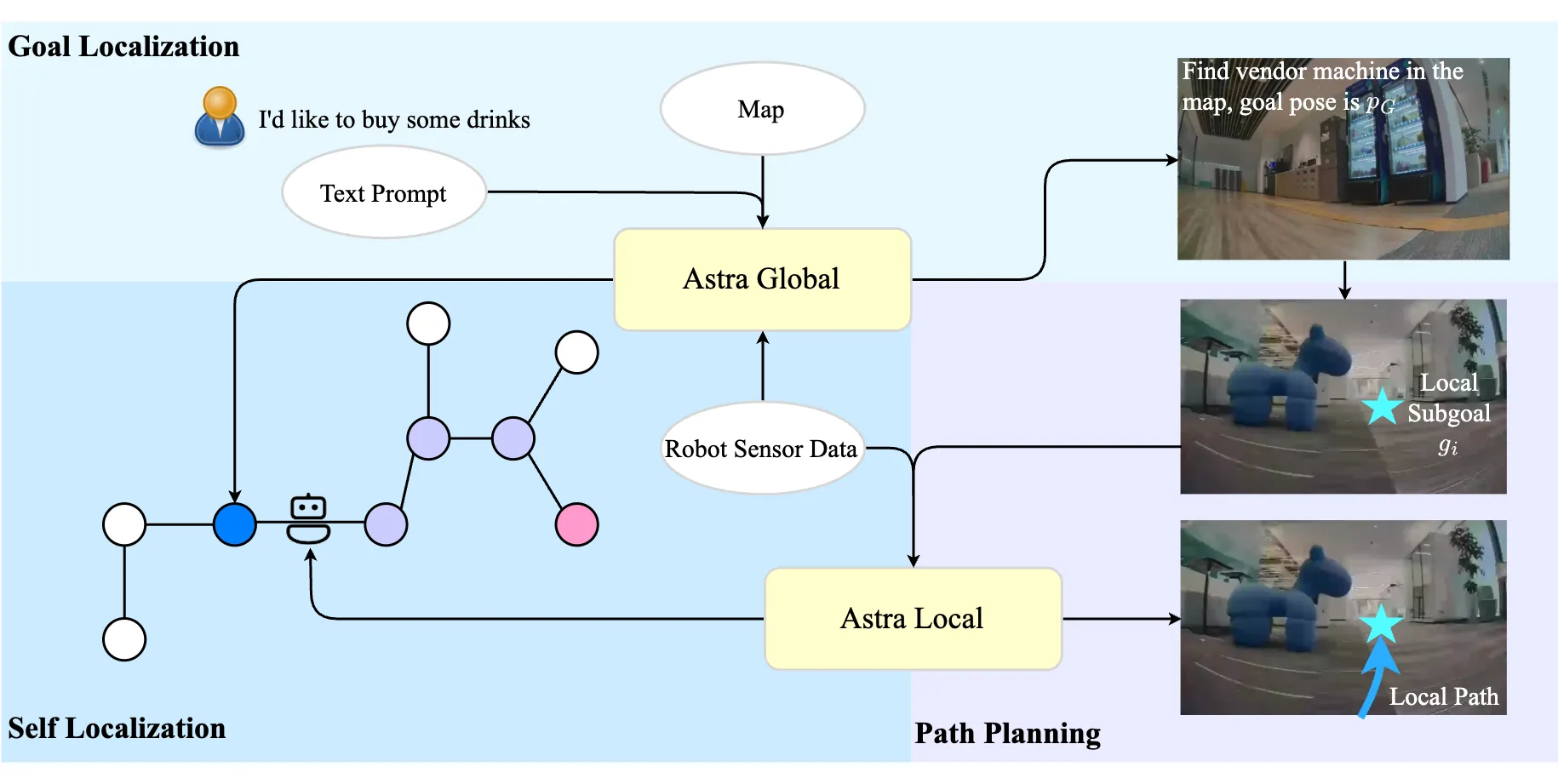

Astra is a two-part AI system that helps a mobile robot find its goal, know where it is, and plan a safe path indoors. It does this by reading camera images and simple text, then matching them to a smart map and nearby scenes, and finally giving the robot a path to follow.

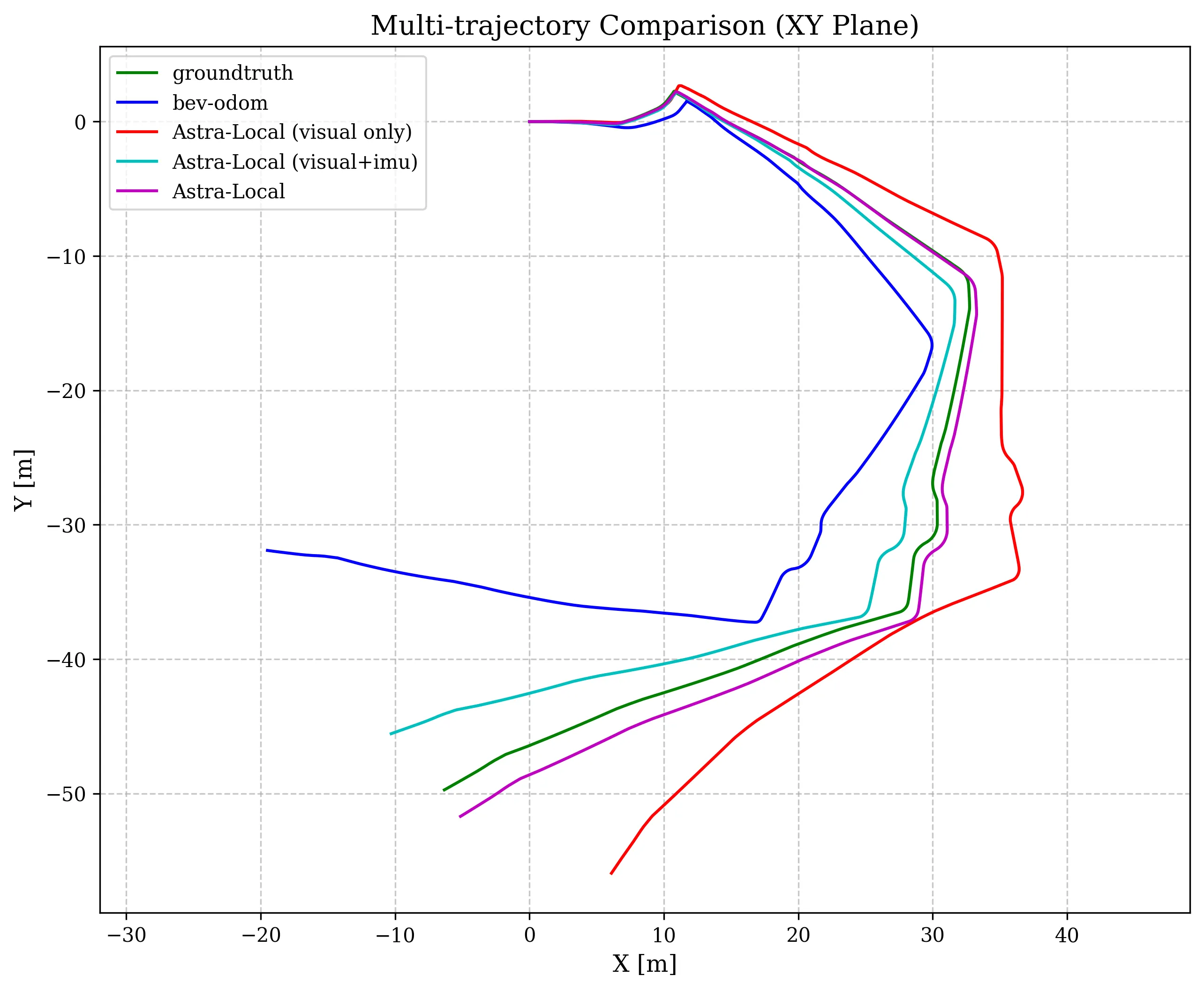

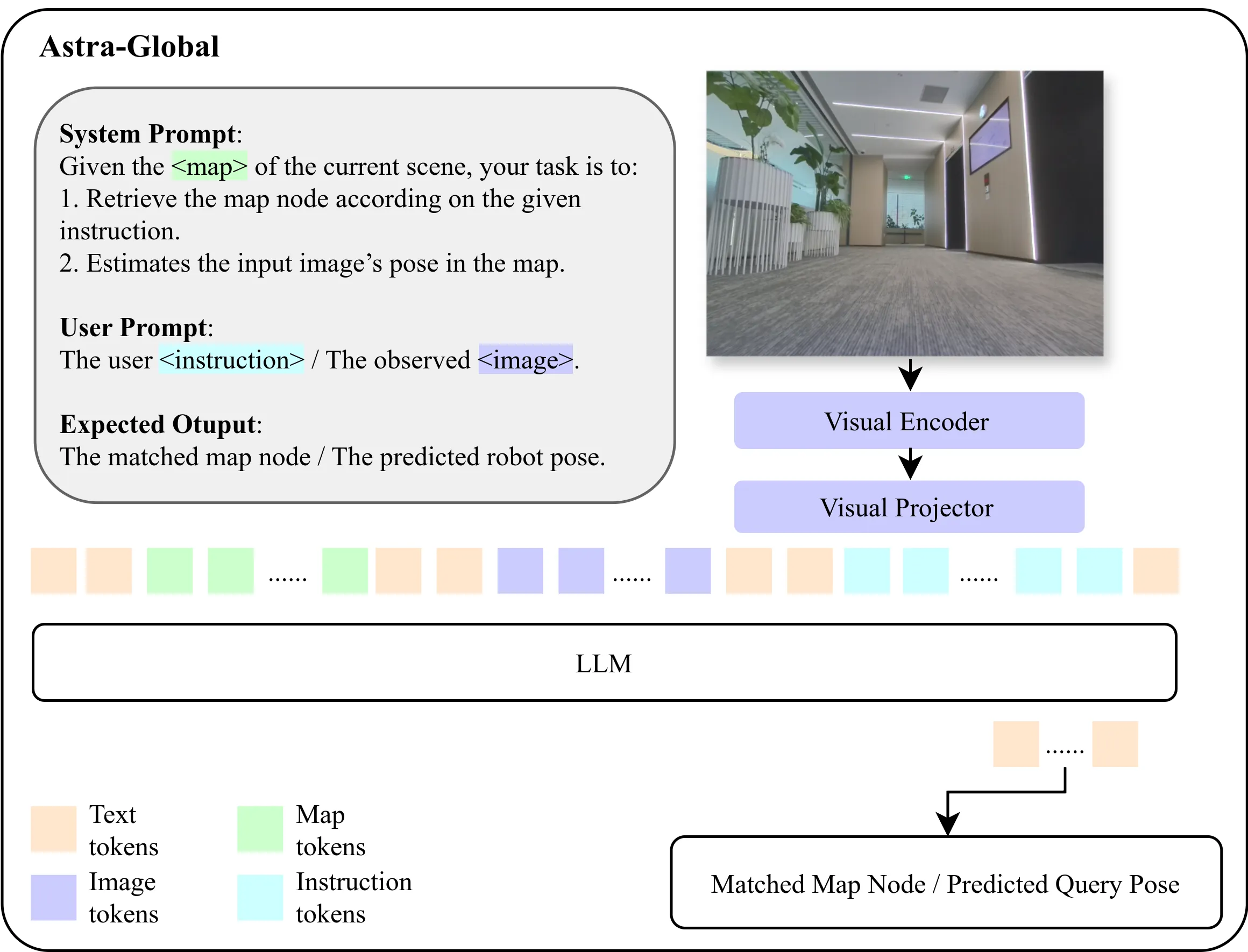

Astra includes two models that work together. Astra-Global finds places and goals using a mix of images and text, and Astra-Local plans short, safe moves while tracking the robot’s motion. This design has been tested on real robots across many types of indoor spaces.


Astra: Navigating the Future with Hierarchical Multimodal Learning for Mobile Robots Overview

Here’s a quick look at what the project offers.

| Item | Details |
|---|---|
| Type | Dual-model robot navigation system (Astra-Global + Astra-Local) |
| Creator | Bytedance Seed |
| Purpose | Help mobile robots find goals, localize themselves, and plan safe paths indoors |
| Main Features | Text and image queries, topological-semantic map, 4D spatial-temporal encoder, flow matching planner, masked ESDF loss, transformer-based odometry fusion |
| Inputs | Camera images, user text prompts, IMU data, wheel odometry |
| Outputs | Goal location, robot global pose, local path (short trajectory), relative pose between frames |
| Map | Hybrid topological-semantic global map with photos and text |
| Training | Supervised Finetuning (SFT) + Reinforcement Learning (RL) for Astra-Global; self-supervised pretraining for 4D encoder; supervised odometry head |
| Performance | High mission success rate on real in-house robots; strong results in new indoor spaces |
| Code/Repo | Public project site with paper and demo; code details not listed on the page |
| Project Page | https://astra-mobility.github.io/ |


Astra: Navigating the Future with Hierarchical Multimodal Learning for Mobile Robots Key Features

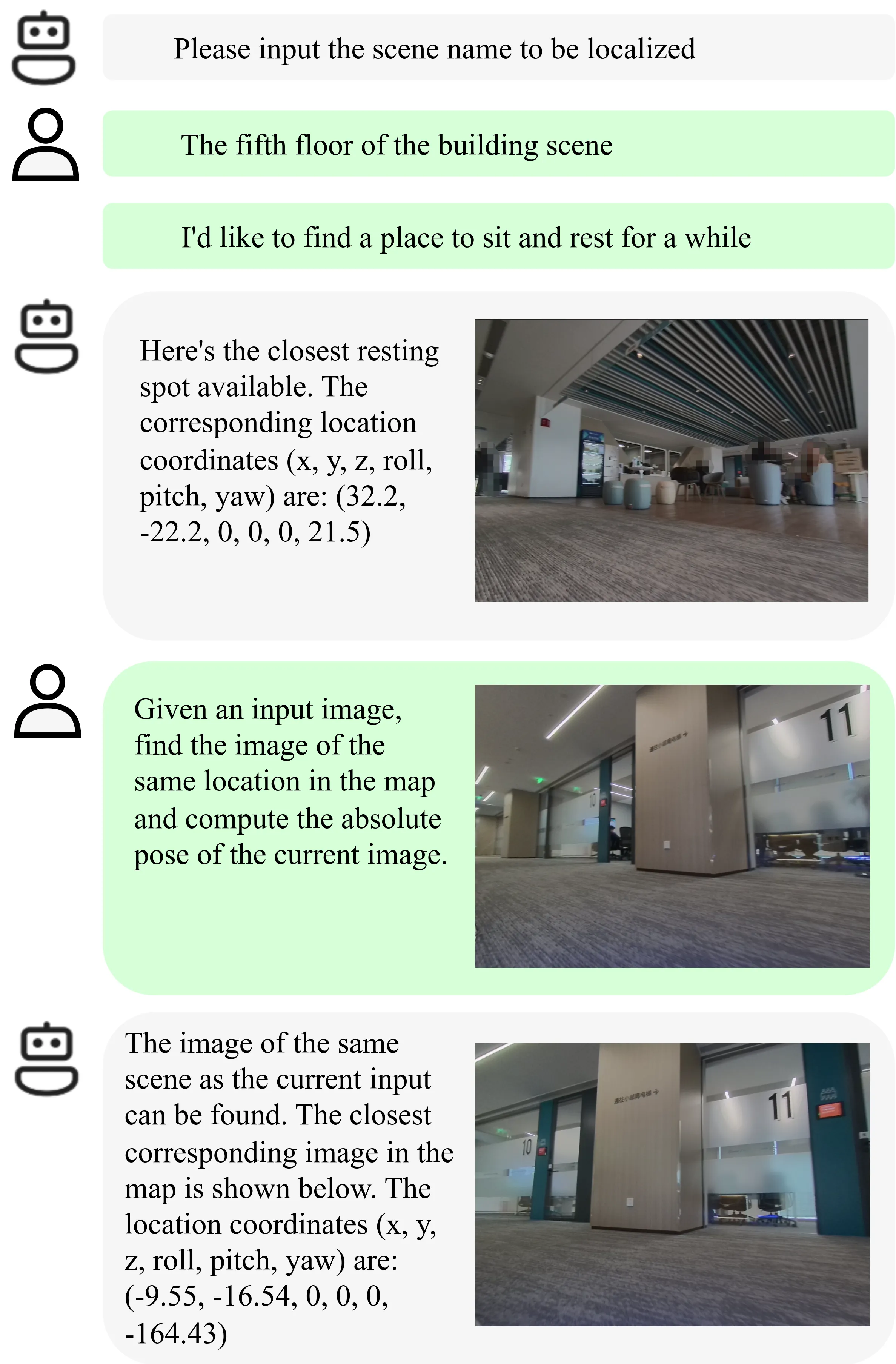

- Goal and self localization from text and images. Tell the robot “find a place to rest,” and it picks the best spot on the map. Show it a current camera view, and it figures out where it is.

-

Works across many indoor settings. The system uses natural signs and landmarks that people already understand, not special tags or QR codes.

-

Smart global map. The map blends photos and short text so the model can reason about places using both sight and words.

-

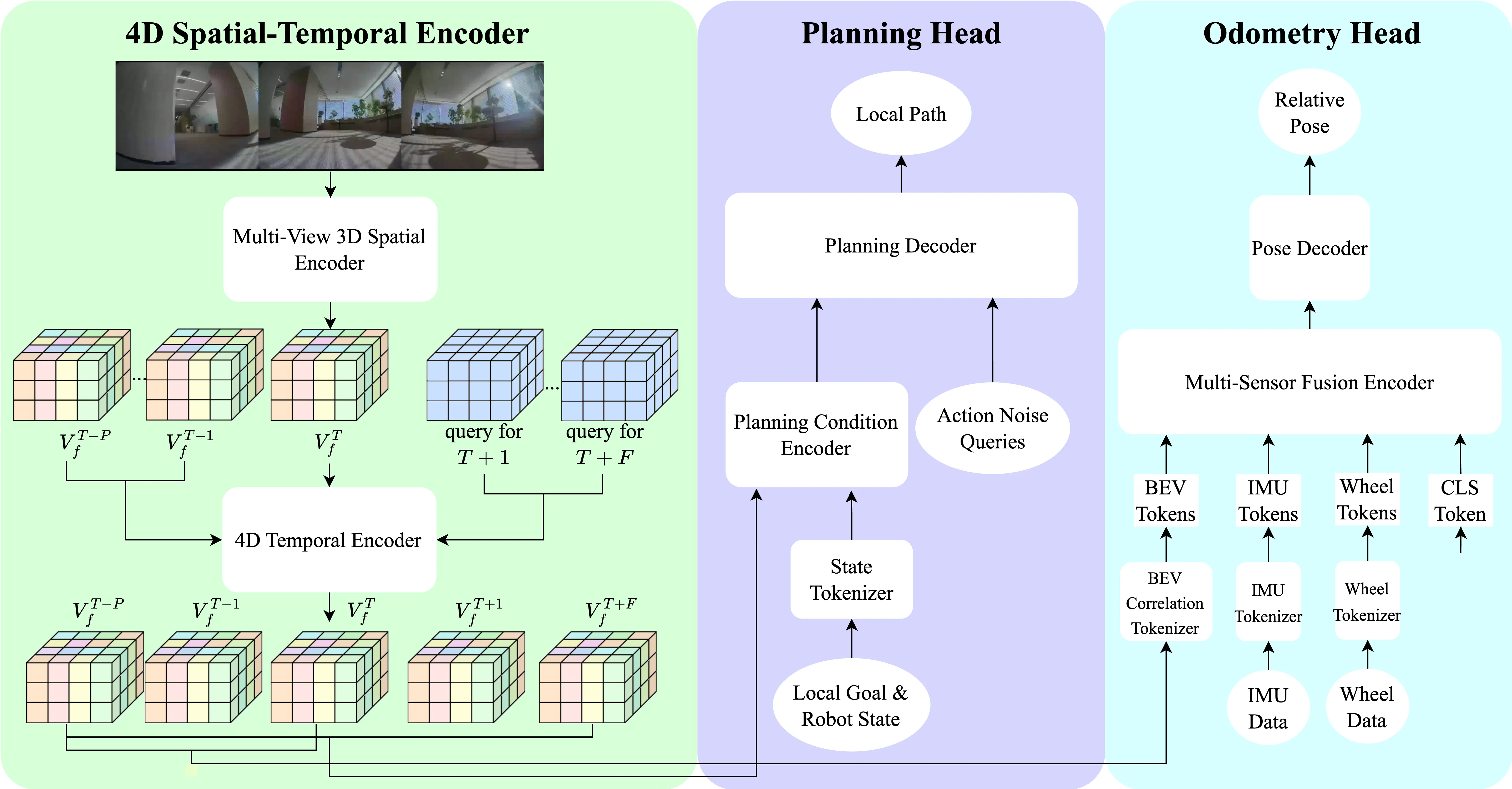

4D spatial-temporal encoder. Astra-Local builds strong features across space and time from multi-camera, multi-frame inputs.

-

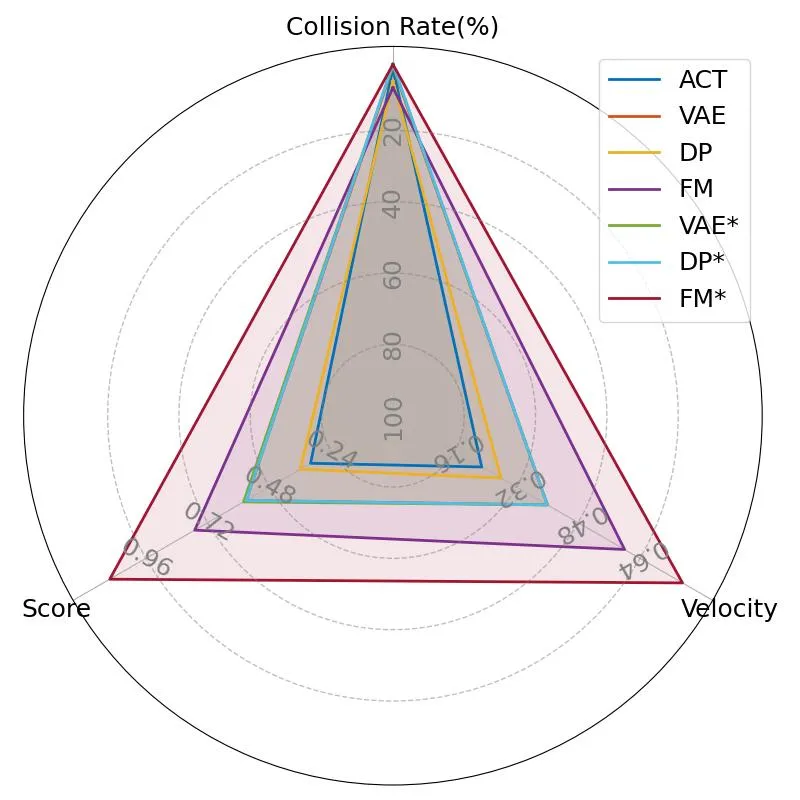

Safe and smooth planning. The planning head uses flow matching and a masked ESDF loss to cut down collisions and keep motion stable.

-

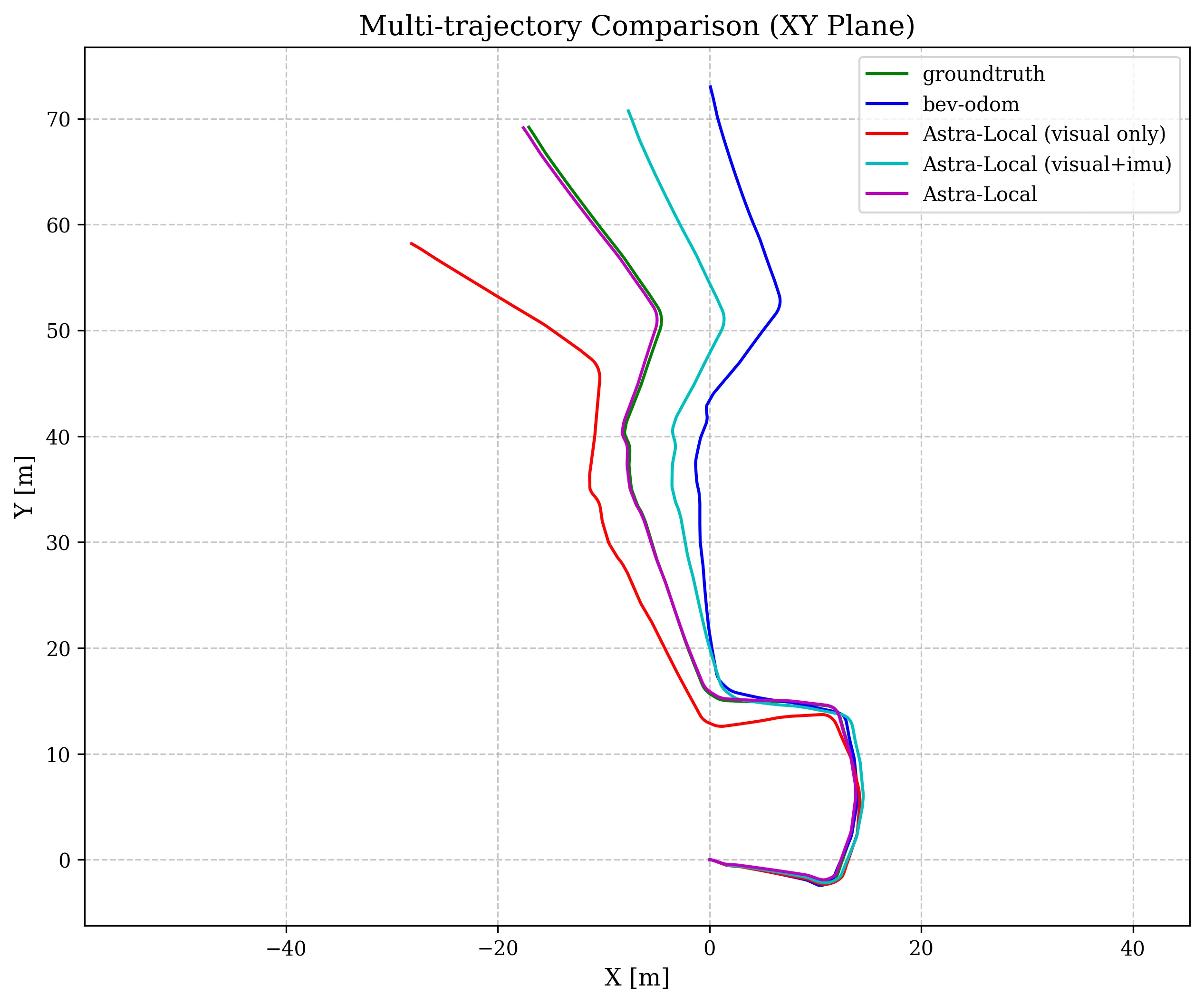

Reliable odometry. A transformer fuses 4D features, IMU, and wheel data to predict the robot’s motion step by step.

How Astra Works (Simple Walkthrough)

- You give a goal using text, like “bring water to the lounge.” Astra-Global reads this and picks a goal pose on the global map.
- The robot takes a photo of what it sees. Astra-Global matches it to landmarks in the map and fuses this with motion data to get the robot’s global pose.
- Astra-Local receives a subgoal near the final goal. It plans a short local path that avoids bumps and tight spots.
- The robot follows the path. At each step, the odometry head re-checks the robot’s motion from cameras, IMU, and wheels.
- As the robot moves, Astra repeats the steps. It updates self location and refines the local path until the goal is reached.
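
The walkthrough above can be sketched as a simple loop. This is a minimal illustration, not Astra's code: every function name here (`pick_goal`, `next_subgoal`, `plan_local_path`, `navigate`) is hypothetical, the goal lookup is a plain keyword match standing in for Astra-Global, the planner draws straight lines instead of using Astra-Local, and the robot is assumed to follow each path perfectly on a flat 2D plane.

```python
import math

# All names below are hypothetical stand-ins, not Astra's actual API.

def pick_goal(text, places):
    """Stand-in for Astra-Global goal localization: match a place name in the text."""
    for name, xy in places.items():
        if name in text.lower():
            return xy
    raise ValueError("no matching place for: " + text)

def next_subgoal(pose, goal, horizon=1.0):
    """Pick a point at most `horizon` metres from the robot, toward the goal."""
    dx, dy = goal[0] - pose[0], goal[1] - pose[1]
    dist = math.hypot(dx, dy)
    if dist <= horizon:
        return goal
    return (pose[0] + dx / dist * horizon, pose[1] + dy / dist * horizon)

def plan_local_path(pose, subgoal, steps=4):
    """Stand-in for Astra-Local planning: evenly spaced straight-line waypoints."""
    return [(pose[0] + (subgoal[0] - pose[0]) * i / steps,
             pose[1] + (subgoal[1] - pose[1]) * i / steps)
            for i in range(1, steps + 1)]

def navigate(text, places, start, tol=0.05, max_iters=100):
    """Repeat: check arrival, pick a subgoal, plan a short path, follow it."""
    goal = pick_goal(text, places)
    pose = start
    for _ in range(max_iters):
        if math.hypot(goal[0] - pose[0], goal[1] - pose[1]) <= tol:
            return pose, True
        subgoal = next_subgoal(pose, goal)
        path = plan_local_path(pose, subgoal)
        pose = path[-1]  # assumption: the robot tracks the path perfectly
    return pose, False
```

Calling `navigate("bring water to the lounge", {"lounge": (3.0, 4.0)}, (0.0, 0.0))` walks the toy robot toward the lounge one short path at a time. In a real system the pose update would come from localization and odometry rather than from the last waypoint.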

The Technology Behind It

Astra-Global: a multimodal LLM for the map and queries. Images are encoded, aligned with text, and fed into a language model that reasons about both. The team used Supervised Finetuning and Reinforcement Learning, which helped it generalize and cut data needs.
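
To make the idea of a map that mixes photos and text more concrete, here is a minimal sketch of one possible data layout. The `MapNode` fields and the `find_goal` helper are hypothetical and not taken from the paper. The word-overlap score is only a crude placeholder for the reasoning that the multimodal LLM performs in Astra-Global.

```python
from dataclasses import dataclass, field

@dataclass
class MapNode:
    """One place in a toy topological-semantic map (hypothetical layout)."""
    node_id: int
    pose: tuple                                    # (x, y, heading) in the map frame
    description: str                               # short text attached to the place
    images: list = field(default_factory=list)     # photo file names for the place
    neighbors: list = field(default_factory=list)  # ids of directly connected nodes

def score(query, node):
    """Count shared words: a placeholder for the multimodal LLM, not Astra's method."""
    q = set(query.lower().split())
    d = set(node.description.lower().split())
    return len(q & d)

def find_goal(query, nodes):
    """Return the best-matching node, or None when nothing overlaps."""
    best = max(nodes, key=lambda n: score(query, n))
    if score(query, best) == 0:
        return None
    return best
```

With one node described as "lounge area with sofa to rest" and another as "kitchen with sink and coffee machine", the query "find a place to rest" selects the lounge node, and its `pose` would serve as the goal pose.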

Astra-Local: a 4D spatial-temporal encoder trained with self-supervised learning. It learns shared 3D features first, then learns to predict future states, giving strong 4D features for planning and odometry.

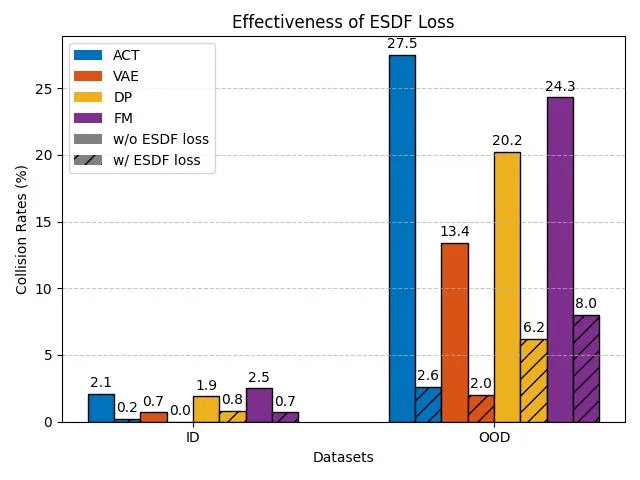

The planning head generates a trajectory from noise using flow matching and adds a masked ESDF (Euclidean signed distance field) loss to reduce collisions. The team reports clear gains in path safety and quality.
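
The two ingredients of the planning head can be sketched in a few lines. This is an illustration under stated assumptions, not the authors' implementation. It uses the common linear-path form of flow matching, where a noisy sample `x0` and a target trajectory `x1` are blended as `(1 - t) * x0 + t * x1` and the training target is the velocity `x1 - x0`. The source does not say which path Astra uses. It also does not define the mask, so here the mask is assumed to mark grid cells where the distance value is trusted. All function names, the `margin`, and the grid layout are hypothetical.

```python
def flow_matching_target(x0, x1, t):
    """Linear-path flow matching: the point at time t and its target velocity."""
    xt = [(1 - t) * a + t * b for a, b in zip(x0, x1)]
    v = [b - a for a, b in zip(x0, x1)]
    return xt, v

def flow_matching_loss(v_pred, v_target):
    """Mean squared error between predicted and target velocity."""
    return sum((p - q) ** 2 for p, q in zip(v_pred, v_target)) / len(v_target)

def sample(velocity_fn, x0, steps=10):
    """Euler integration of dx/dt = velocity_fn(x, t) from t=0 (noise) to t=1."""
    x, dt = list(x0), 1.0 / steps
    for k in range(steps):
        v = velocity_fn(x, k * dt)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x

def masked_esdf_loss(waypoints, esdf, mask, margin=0.5, cell=1.0):
    """Penalize waypoints closer than `margin` to an obstacle.

    esdf[i][j] is the distance to the nearest obstacle for the cell in
    row i (y axis) and column j (x axis). Cells with mask[i][j] False,
    and waypoints outside the grid, are skipped (assumed meaning of "masked").
    """
    total, count = 0.0, 0
    for x, y in waypoints:
        i, j = int(y // cell), int(x // cell)
        if 0 <= i < len(esdf) and 0 <= j < len(esdf[0]) and mask[i][j]:
            total += max(0.0, margin - esdf[i][j]) ** 2
            count += 1
    return total / count if count else 0.0
```

During training, a combined objective would add the collision penalty to the flow matching loss with some weight. At inference, `sample` would be called with the learned velocity model in place of `velocity_fn`.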

The odometry head uses a transformer to combine video features with IMU and wheel data. Each sensor is tokenized per time step, then fed with learned embeddings to estimate the relative pose using a simple loss. This helps the robot keep track of how far and where it has moved.
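
To show how a stream of relative poses becomes a running position estimate, here is a minimal sketch that chains them together. It assumes planar motion, with each relative pose given as `(dx, dy, dtheta)` in the robot's own frame. That simplification is made for illustration and is not stated in the source. The names `compose` and `integrate` are hypothetical.

```python
import math

def compose(pose, delta):
    """Apply a robot-frame relative pose (dx, dy, dtheta) to a global pose (x, y, theta)."""
    x, y, th = pose
    dx, dy, dth = delta
    nx = x + dx * math.cos(th) - dy * math.sin(th)
    ny = y + dx * math.sin(th) + dy * math.cos(th)
    # Wrap the heading into [-pi, pi) so it stays bounded over long runs.
    nth = (th + dth + math.pi) % (2 * math.pi) - math.pi
    return (nx, ny, nth)

def integrate(start, deltas):
    """Chain relative poses, one per time step, into a final global pose."""
    pose = start
    for d in deltas:
        pose = compose(pose, d)
    return pose
```

For example, driving 1 m forward while turning 90 degrees left, then driving 1 m forward again, moves the robot from the origin to roughly `(1, 1)` facing left. Small errors in each relative pose accumulate along the chain, which is why the global pose from Astra-Global is still needed to correct drift.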

Astra: Navigating the Future with Hierarchical Multimodal Learning for Mobile Robots Use Cases

- Office delivery: bring items to meeting rooms, kitchens, or lounges by name.
- Home help: guide a robot to the sofa, kitchen island, or bedroom using plain words.
- Retail guide: lead customers to “winter coats area” or “customer service” with a photo or text prompt.
- Campus and hotels: respond to “find the nearest elevator” or “take me to lobby”.
- Warehouses: move between known zones with fewer special markers needed.


Performance & Showcases

Astra reports strong results across many indoor spaces. The system shows high end-to-end mission success rates on real robots, and it works well in places it has not seen before.

The showcase video highlights Astra running in practice. You can see how text and images guide the map search and how the local planner keeps motion safe in tight spots. It covers the full loop from goal to path.

Showcase 1: video (embedded on the original page)

Getting Started: Where to Find More

- Project page: The team shares the paper, figures, and a demo on the official site. Visit the page for updates on releases and datasets.
- Code and install: The public page does not list install steps or a repo at this time. Keep an eye on the site for future setup guides.
- Try it in a lab: If you run mobile robots, you can start by preparing a photo-and-text map of your site and logging camera, IMU, and wheel data. This will make it easier to test once code is shared.

FAQ

What problems does Astra solve inside buildings?

It helps a robot know where it is, find where to go from simple text, and plan a safe route. It reduces crashes and follows smooth paths. It works across many types of rooms and halls.

How does it find a goal from text?

Astra-Global reads your words and matches them to places and photos stored in a global map. It picks a goal pose that fits the text, like “rest area” or “kitchen.” This gives the robot a clear target.

How does it know its own position?

It looks at what the camera sees and finds nearby landmarks in the map. It also uses motion from IMU and wheels. The model fuses these to get a stable global pose.

What helps it avoid collisions?

The planner uses a masked ESDF loss that teaches the model to stay away from obstacles. It also uses flow matching to produce stable, smooth motions. Tests show much lower collision rates.

Can it handle new buildings?

Tests show it works well in new indoor spaces thanks to its training mix and map design. It relies on human-friendly landmarks, not special tags. This makes it easier to move to a new place.

Image source: https://astra-mobility.github.io/