MagicVideo-V2: The Future of High-Aesthetic Video Generation at Your Fingertips

What Is MagicVideo-V2?

MagicVideo‑V2 is an AI system that turns short text prompts into high‑quality video clips. You type a sentence, and it creates a moving scene with rich color, clean frames, and well‑timed motion.

It is built to help creators, brands, and storytellers make short videos fast. The focus is on pleasing style, steady motion, and sharp, well‑formed details.

MagicVideo-V2 Overview

Here is a quick look at the project in one place.

| Item | Details |
|---|---|
| Type | AI text‑to‑video model and public demo website |
| Purpose | Create short, high‑aesthetic videos from plain text prompts |
| Inputs | A short text description (prompt) |
| Output | Short video clips with stable motion and clean style |
| Main Features | Strong style quality, smooth subject motion, support for many art looks and moods |
| Who It’s For | Creators, marketers, educators, product teams, social media managers |
| Access | Public project page: magicvideov2.github.io |
| Organization | ByteDance research team |
| Typical Wait Time | Minutes from prompt to video (varies with queue and server load) |
| Pricing | Not listed on the project page at the time of writing |

MagicVideo-V2 Key Features

- Text to video in one step. Type a short line; get a moving clip.

- High‑aesthetic look. Strong color, clean framing, and pleasing style.

- Flexible styles. From photo‑like scenes to art styles and cute drawings.

- Stable motion. Subjects move in a steady, believable way for short clips.

- Good detail. Faces, textures, and objects look tidy and well formed.

- Prompt friendly. It responds well to clear, simple prompts and easy style cues.

MagicVideo-V2 Use Cases

- Social posts. Turn a product idea or trend into a shareable clip.

- Ads and promos. Make quick concept videos for campaigns.

- Education. Build short video explainers from lesson notes.

- Storytelling. Draft mood pieces, concept shots, or scene ideas.

- Art and design. Try out color, shape, and motion studies quickly.

- Prototyping. Show a feature idea to your team without a full shoot.

How It Works (In Simple Terms)

MagicVideo‑V2 learned from many video examples. It studied how images change from one frame to the next.

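When you give it a prompt, it first makes a single image that sets the look and key objects. It then animates that image into a short set of keyframes, sharpens them, and fills in the frames between them so the scene changes over time in a steady way.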
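As we read the MagicVideo-V2 paper, the model is a chain of four stages: text-to-image (T2I), image-to-video (I2V), video-to-video (V2V) for higher resolution and detail, and video frame interpolation (VFI). The toy Python sketch below only shows how data flows through such a chain. Every function is a hypothetical stand-in that passes text labels instead of images, the keyframe count and interpolation factor are arbitrary, and none of it is MagicVideo-V2's actual code.

```python
from typing import Callable, List

# Toy stand-in: each "frame" is a text label, not an image.
Frames = List[str]

def text_to_image(prompt: str) -> Frames:
    """T2I stand-in: one reference image that sets the look of the scene."""
    return [f"ref[{prompt}]"]

def image_to_video(frames: Frames, num_keyframes: int = 4) -> Frames:
    """I2V stand-in: animate the reference image into a few keyframes."""
    ref = frames[0]
    return [f"{ref}/key{i}" for i in range(num_keyframes)]

def video_to_video(frames: Frames) -> Frames:
    """V2V stand-in: add resolution and detail; the frame count stays the same."""
    return [f"hd({f})" for f in frames]

def frame_interpolation(frames: Frames, factor: int = 2) -> Frames:
    """VFI stand-in: insert (factor - 1) new frames between each neighboring pair."""
    out: Frames = []
    for a, b in zip(frames, frames[1:]):
        out.append(a)
        out.extend(f"mix({a},{b},{k}/{factor})" for k in range(1, factor))
    out.append(frames[-1])
    return out

# Stages after T2I, run in order; each takes and returns a list of frames.
STAGES: List[Callable[[Frames], Frames]] = [image_to_video, video_to_video, frame_interpolation]

def generate(prompt: str) -> Frames:
    frames = text_to_image(prompt)
    for stage in STAGES:
        frames = stage(frames)
    return frames

clip = generate("a corgi runs on a beach, 3D design")
print(len(clip))  # 7: 4 keyframes plus 3 in-between frames
```

Splitting the work this way lets each stage focus on one job: the first image fixes the style, and later stages only have to add motion, detail, and smoothness.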

It runs on powerful servers in the cloud, so you do not need a high‑end computer to try it.

The Technology Behind It

- Large AI model. It was trained to match words to moving scenes.

- Reference image. A first still image guides the later frames, which keeps objects and style steady across the clip.

- Style control. It can follow art styles, lighting, and camera hints in the prompt.

- Safety filters. It aims to avoid unsafe or blocked content.
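Steady motion depends partly on the frames in between keyframes. The simplest way to add such frames is to blend two keyframes pixel by pixel, and the sketch below does that on tiny grayscale frames. It is a generic textbook baseline chosen for illustration, not MagicVideo-V2's method; learned interpolation models estimate motion instead, because a plain blend makes moving objects look ghosted.

```python
from typing import List

Frame = List[List[float]]  # grayscale frame: rows of pixel values in [0, 1]

def blend(a: Frame, b: Frame, t: float) -> Frame:
    """Pixel-wise linear blend: t=0 gives a, t=1 gives b."""
    return [[(1 - t) * pa + t * pb for pa, pb in zip(ra, rb)] for ra, rb in zip(a, b)]

def interpolate(keyframes: List[Frame], factor: int) -> List[Frame]:
    """Insert (factor - 1) blended frames between each pair of keyframes."""
    if factor < 1:
        raise ValueError("factor must be at least 1")
    if not keyframes:
        return []
    out: List[Frame] = []
    for a, b in zip(keyframes, keyframes[1:]):
        out.append(a)
        out.extend(blend(a, b, k / factor) for k in range(1, factor))
    out.append(keyframes[-1])
    return out

dark = [[0.0, 0.0]]
bright = [[1.0, 1.0]]
frames = interpolate([dark, bright], factor=4)
print([f[0][0] for f in frames])  # [0.0, 0.25, 0.5, 0.75, 1.0]
```

With `factor=4`, two keyframes become five frames, so the brightness ramps up in even steps instead of jumping.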

Installation & Setup (Getting Started Fast)

There is no installer to run. You use the website.

Step‑by‑step:

- Open the project page: magicvideov2.github.io.

- Type a short, clear prompt. Keep it simple: subject, action, style.

- Submit your prompt. Wait for the clip to render.

- Download or replay your result.

Tips while you start:

- Keep prompts short at first. Add style tags like “oil painting”, “3D design”, or “photo in warm light.”

- Focus on one subject and one action. This helps motion look clean.

- Test two or three versions to find the look you like.
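These steps are done by hand in the browser, and this article does not describe any programming interface for the model. Still, a few lines of Python can draft the two or three versions worth testing, which you then paste into the prompt box one at a time. The function name and the choice of one style tag per version are our own, for illustration only.

```python
from typing import List, Sequence

# Style tags taken from the tips above; any other short tag works the same way.
STYLE_TAGS = ("oil painting", "3D design", "photo in warm light")

def prompt_variants(subject: str, action: str, styles: Sequence[str] = STYLE_TAGS) -> List[str]:
    """Return one short prompt per style tag: same subject and action, one style each."""
    base = f"{subject} {action}"
    return [f"{base}, {style}" for style in styles]

for p in prompt_variants("a small robot", "waves hello"):
    print(p)
# a small robot waves hello, oil painting
# a small robot waves hello, 3D design
# a small robot waves hello, photo in warm light
```

Keeping the subject and action fixed while changing only the style tag makes it easier to tell which word changed the look.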

Performance & Showcases

Below are project demos that show what the system can do. Each video comes from the official “Text‑to‑Video Examples” set.

Showcase 1: Text-to-Video Examples

This clip is part of the Text-to-Video Examples. It shows how short prompts can become rich moving scenes with steady action.

Showcase 2: Text-to-Video Examples

This is another sample from the Text-to-Video Examples, with a fresh prompt and a different mood. Watch how style and motion stay clean across frames.

Showcase 3: Text-to-Video Examples

From the Text-to-Video Examples, this one focuses on a clear subject and a simple action. It’s a good test of motion and detail.

Showcase 4: Text-to-Video Examples

Part of the Text-to-Video Examples set, this clip shows how the model handles bright colors and object movement.

Showcase 5: Text-to-Video Examples

This Text-to-Video Examples clip highlights neat textures and tidy framing. The subject stays steady as the scene plays out.

Showcase 6: Text-to-Video Examples

From the Text-to-Video Examples, this sample blends a creative prompt with controlled motion. It keeps style cues consistent from start to end.

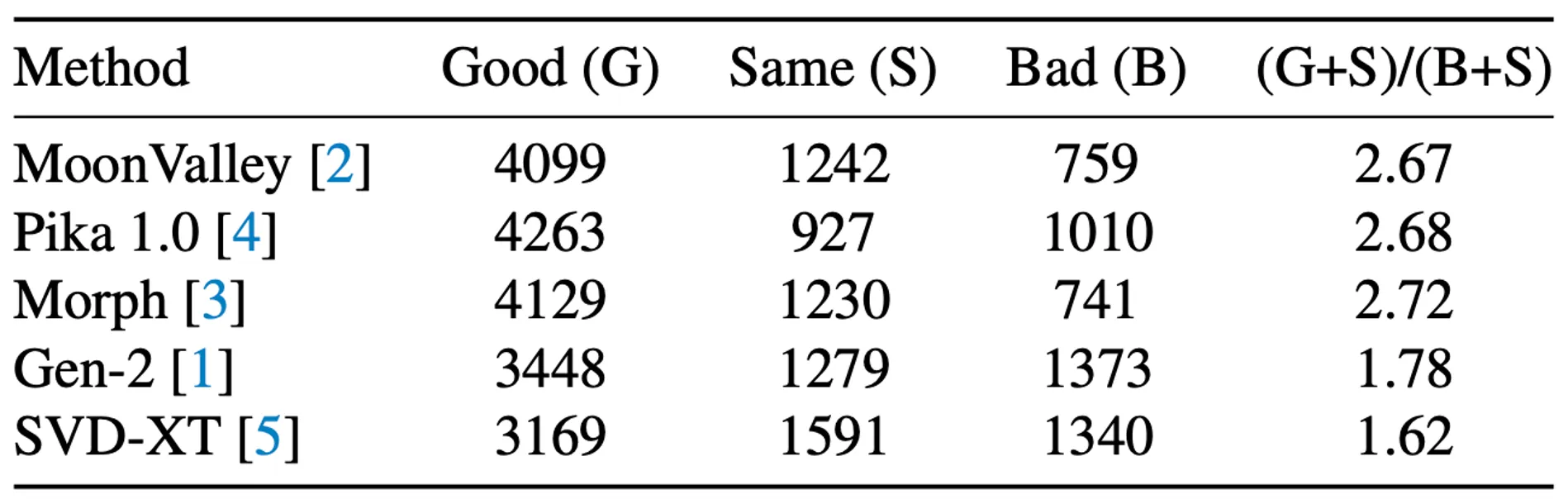

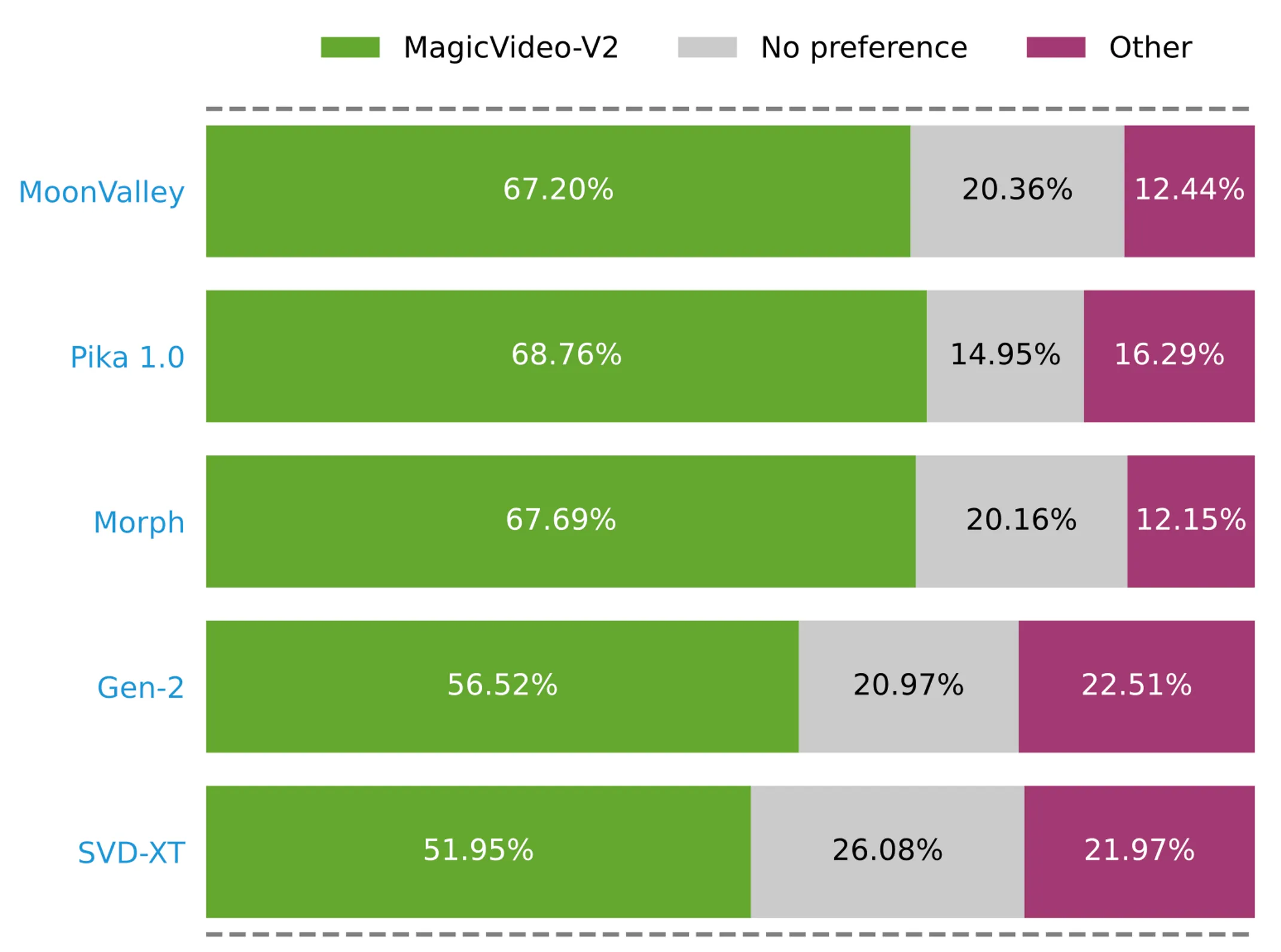

Quality Highlights and Human Feedback

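The project page shows results that look clean and well timed, with steady motion, clear subjects, and smooth changes from frame to frame. In the MagicVideo-V2 paper, the team also reports a large-scale user evaluation in which raters preferred its clips over those from other text-to-video systems such as Runway, Pika 1.0, and Stable Video Diffusion.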

People tend to prefer clips that stick to the prompt and hold style from frame to frame. The model aims to do both well.

Writing Great Prompts

- Structure your prompt. Use this order: subject, action, place or background, style tag (for example: “oil painting” or “3D design”).

- Be clear and short. Two short sentences are better than one long one.

- Add light and camera hints if needed. For example: “warm evening light”, “medium shot”.
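The prompt order in this list maps neatly onto a small data structure. This sketch assumes nothing about MagicVideo-V2 beyond plain text input: it fills the fields in the order given (subject, action, place, style, then optional light and camera hints) and emits two short sentences, what happens and then how it looks. The class and field names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class PromptParts:
    # Hypothetical field names that follow the order suggested above.
    subject: str
    action: str
    place: str
    style: str
    light: Optional[str] = None   # e.g. "warm evening light"
    camera: Optional[str] = None  # e.g. "medium shot"

    def build(self) -> str:
        """Two short sentences: what happens, then how it looks."""
        scene = " ".join(p for p in (self.subject, self.action, self.place) if p)
        look = ", ".join(p for p in (self.style, self.light, self.camera) if p)
        if not look:
            return f"{scene}."
        return f"{scene}. {look[0].upper()}{look[1:]}."

prompt = PromptParts(
    subject="A golden retriever",
    action="runs",
    place="across a beach",
    style="oil painting",
    light="warm evening light",
    camera="medium shot",
).build()
print(prompt)
# A golden retriever runs across a beach. Oil painting, warm evening light, medium shot.
```

Making light and camera optional keeps the default prompt short, in line with the advice to add hints only when needed.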

Creative Tips for Better Results

- One main subject works best. Too many moving parts can cause messy motion.

- Use simple actions. Walk, turn, smile, pour, and wave are safe starter verbs.

- Try style sets. Photo, oil paint, watercolor, 3D toy, anime, or storybook can change the mood fast.

- Iterate. Small edits to the prompt can raise quality a lot.
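Some of these tips can be checked before you submit a prompt. The hypothetical helper below counts sentences and words and looks for one of the style words listed above. The thresholds are rough guesses of ours, not limits published for MagicVideo-V2, and the check cannot judge things like how many subjects a prompt has.

```python
import re
from typing import List

# Rough guesses for illustration, not limits published for MagicVideo-V2.
MAX_SENTENCES = 2
MAX_WORDS = 30
# Lowercase style words from the tips above ("oil paint" also matches "oil painting").
STYLE_WORDS = ("photo", "oil paint", "watercolor", "3d toy", "anime", "storybook")

def prompt_warnings(prompt: str) -> List[str]:
    """Return plain-language warnings; an empty list means no issues were found."""
    warnings: List[str] = []
    sentences = [s for s in re.split(r"[.!?]+", prompt) if s.strip()]
    if len(sentences) > MAX_SENTENCES:
        warnings.append(f"{len(sentences)} sentences; try {MAX_SENTENCES} or fewer")
    words = prompt.split()
    if len(words) > MAX_WORDS:
        warnings.append(f"{len(words)} words; try trimming to {MAX_WORDS} or fewer")
    lowered = prompt.lower()
    if not any(tag in lowered for tag in STYLE_WORDS):
        warnings.append("no style word found; add one such as 'watercolor'")
    return warnings

print(prompt_warnings("A fox walks through snow. Watercolor."))  # []
print(prompt_warnings("A fox walks through snow."))
# ["no style word found; add one such as 'watercolor'"]
```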

Where This Fits in Your Team

- Content teams can draft rough cuts for reviews in minutes.

- Product and growth teams can test ad hooks quickly before a full shoot.

- Teachers can build short clips to explain ideas to students.

FAQ

How long are the videos?

Most samples are short clips. Length can vary based on the demo and server settings.

Can I control the style?

Yes. Add clear style words in your prompt, like “oil painting” or “3D design.” Keep it simple and direct.

What if my clip looks off?

Try a shorter prompt with one subject and one action. Then add one or two style words.

Do I need a strong computer?

No. It runs in the cloud. You only need a web browser and an internet connection.

The Bottom Line

MagicVideo‑V2 turns short text into clean, stylish video clips. It is fast to try, easy to learn, and great for quick ideas or content drafts.

Image source: MagicVideo-V2: The Future of High-Aesthetic Video Generation at Your Fingertips